A retrieval-augmented generation (RAG) pipeline is the data and retrieval architecture that lets an AI system answer from approved knowledge. For enterprise AI integration, the pipeline needs to do more than push documents into a vector database. It must preserve context, permissions, freshness, citations, and operational control.

The difference between a demo and a production RAG system is the quality of the pipeline. A demo can ingest a folder and answer a few sample questions. A production system has to keep working as documents change, users ask messy questions, permissions shift, and leadership expects trustworthy answers.

The Core RAG Pipeline

- Ingestion: collect content from document repositories, databases, APIs, file systems, and knowledge bases.

- Extraction: convert files into clean text while preserving tables, headings, metadata, and source relationships where possible.

- Chunking: divide content into useful retrieval units without losing context.

- Embedding: generate vector representations that make semantic search possible.

- Indexing: store vectors, source text, metadata, permissions, and source links.

- Retrieval: match user questions to the most relevant approved source material.

- Generation: produce answers from retrieved context with citations and task-specific instructions.
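
The stages above can be sketched end to end in a few dozen lines. This is a minimal illustration, not a production design: the term-count `embed` function stands in for a real embedding model, the in-memory list stands in for a vector database, and every name here (`Chunk`, `build_index`, `retrieve`, `build_prompt`, the sample file names) is hypothetical. The generation step stops at prompt assembly, since the model call depends on the provider.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass


@dataclass
class Chunk:
    text: str
    source: str  # identifier or link for the originating document


def chunk_text(text: str, source: str, max_words: int = 40) -> list[Chunk]:
    """Chunking: fixed-size word windows (a stand-in for structure-aware chunking)."""
    words = text.split()
    return [
        Chunk(" ".join(words[i:i + max_words]), source)
        for i in range(0, len(words), max_words)
    ]


def embed(text: str) -> Counter:
    """Embedding: toy term counts. A real system would call an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def build_index(docs: dict[str, str]) -> list[tuple[Counter, Chunk]]:
    """Indexing: keep each vector next to its source text and source link."""
    return [
        (embed(chunk.text), chunk)
        for source, text in docs.items()
        for chunk in chunk_text(text, source)
    ]


def retrieve(index: list[tuple[Counter, Chunk]], question: str, k: int = 2) -> list[Chunk]:
    """Retrieval: rank chunks by similarity to the question and keep the top k."""
    query = embed(question)
    ranked = sorted(index, key=lambda item: cosine(query, item[0]), reverse=True)
    return [chunk for _, chunk in ranked[:k]]


def build_prompt(question: str, chunks: list[Chunk]) -> str:
    """Generation input: numbered sources so the answer can cite them."""
    context = "\n".join(
        f"[{i}] ({c.source}) {c.text}" for i, c in enumerate(chunks, 1)
    )
    return (
        "Answer using only the numbered sources and cite them.\n"
        f"{context}\nQuestion: {question}"
    )


# Ingestion and extraction are assumed to have produced clean text already.
docs = {
    "travel-policy.txt": "Employees must book travel through the approved portal at least 14 days ahead.",
    "expense-policy.txt": "Expense reports are due within 30 days of purchase and need receipts.",
}
index = build_index(docs)
question = "When are expense reports due?"
top = retrieve(index, question, k=1)
prompt = build_prompt(question, top)
print(top[0].source)  # expense-policy.txt
```

Keeping the source identifier attached to every chunk from indexing onward is what makes citations possible at the generation step.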

Enterprise Requirements That Change the Design

Enterprise RAG development introduces constraints that are easy to miss in early pilots. Users may have different access rights, and some documents may be stale or superseded. Source systems may use inconsistent metadata. Some answers may require citations, and some workflows may require human review before the answer is used.

The architecture should include permission-aware retrieval, source freshness checks, metadata normalization, logging, evaluation, and clear rules for what happens when the system is uncertain.
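
As a rough sketch of how permission-aware retrieval, freshness checks, and an uncertainty rule might fit together, the example below filters retrieved candidates before anything reaches the model. The field names (`allowed_roles`, `last_reviewed`), the thresholds, and the sample paths are assumptions made for illustration; a real deployment would take roles from its identity provider and review dates from the source system.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Candidate:
    text: str
    source: str
    allowed_roles: frozenset[str]  # roles permitted to read this chunk
    last_reviewed: date            # when the source was last confirmed current
    score: float                   # retriever similarity, assumed to be in [0, 1]


def filter_candidates(candidates, user_roles, today, max_age_days=365, min_score=0.5):
    """Keep chunks the user may see, that are fresh, and that score well enough."""
    cutoff = today - timedelta(days=max_age_days)
    kept = [
        c for c in candidates
        if c.allowed_roles & user_roles   # permission check
        and c.last_reviewed >= cutoff     # freshness check
        and c.score >= min_score          # confidence floor
    ]
    return sorted(kept, key=lambda c: c.score, reverse=True)


def answer_or_escalate(candidates, user_roles, today):
    """Explicit rule for uncertainty: escalate instead of answering without sources."""
    usable = filter_candidates(candidates, user_roles, today)
    if not usable:
        return {"status": "escalate", "reason": "no approved, current source found", "sources": []}
    return {"status": "answer", "sources": [c.source for c in usable]}


today = date(2025, 1, 15)
candidates = [
    Candidate("Per diem is set by location.", "finance/per-diem-2024.pdf",
              frozenset({"finance"}), date(2024, 10, 1), 0.82),
    Candidate("Old per diem table.", "finance/per-diem-2019.pdf",
              frozenset({"finance", "staff"}), date(2019, 3, 1), 0.90),
    Candidate("Contract ceiling details.", "contracts/ceiling.docx",
              frozenset({"contracts"}), date(2024, 12, 1), 0.70),
]

finance_result = answer_or_escalate(candidates, {"finance"}, today)
staff_result = answer_or_escalate(candidates, {"staff"}, today)
print(finance_result["sources"])  # ['finance/per-diem-2024.pdf']
print(staff_result["status"])     # escalate
```

Filtering before generation, rather than after, means a restricted or superseded document never enters the prompt, so it cannot leak into an answer.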

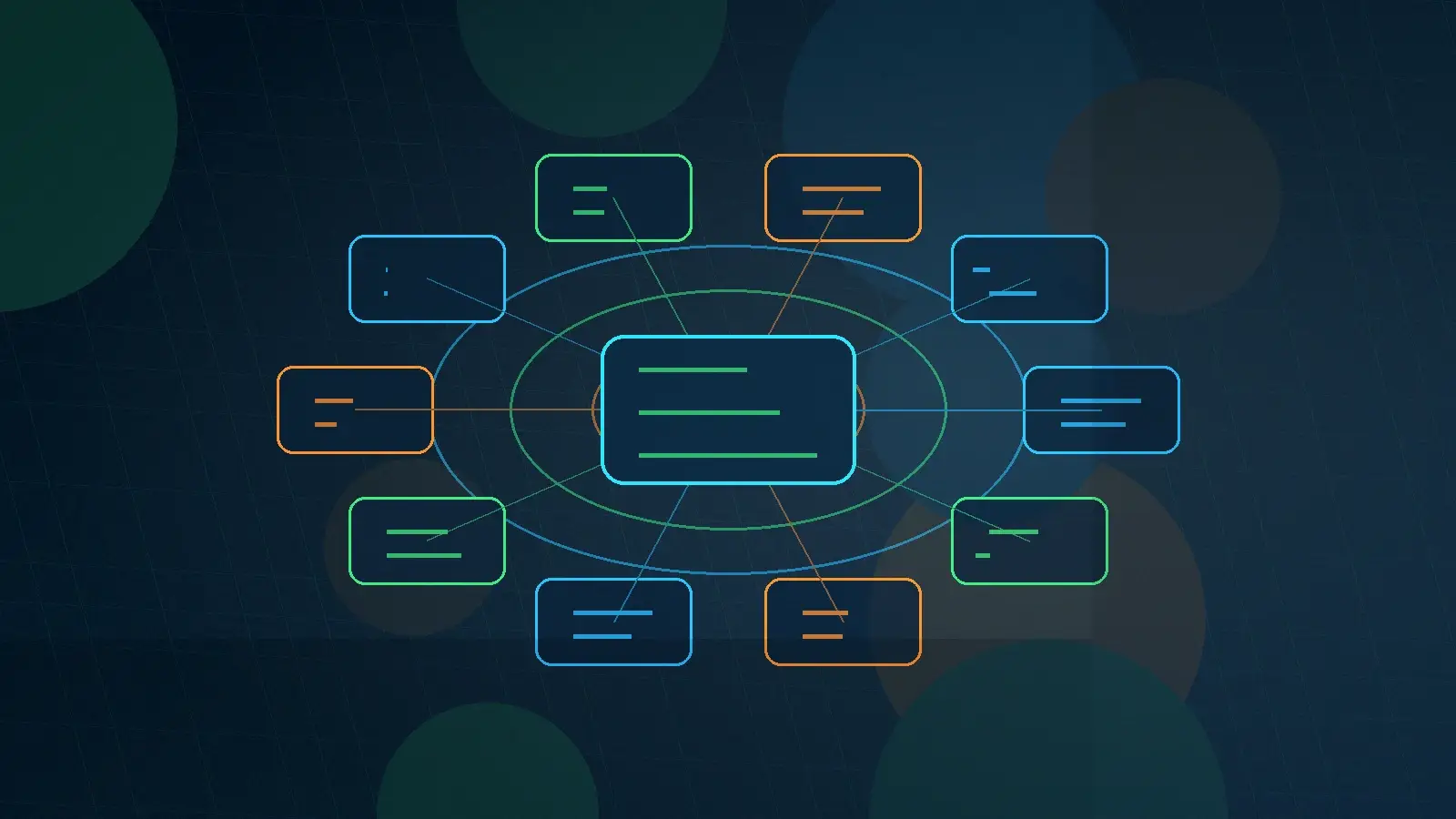

RAG, LLM Orchestration, and Governance

The retrieval layer should not operate alone. LLM orchestration determines which model receives the retrieved context, how the prompt is assembled, what tools can be used, and whether the output requires review. Governance determines which users, models, data sources, and workflows are approved.
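
One way to picture the split between orchestration and governance is a small planning function that consults a policy table before any model is called. This sketch is illustrative only: the `POLICY` table, the workflow names, and the model names are hypothetical and do not describe any particular product's configuration.

```python
from dataclasses import dataclass

# Hypothetical governance policy: which models each workflow may use,
# and whether a human must review the output before it is used.
POLICY = {
    "policy_qa": {"approved_models": ["small-model", "large-model"], "requires_review": False},
    "proposal_draft": {"approved_models": ["large-model"], "requires_review": True},
}


@dataclass
class Plan:
    model: str
    prompt: str
    requires_review: bool


def plan_request(workflow: str, question: str, context: list[str], preferred_model: str) -> Plan:
    """Orchestration: choose a model, assemble the prompt, and flag review needs."""
    rules = POLICY.get(workflow)
    if rules is None:
        # Governance: unapproved workflows are rejected before any model call.
        raise PermissionError(f"workflow {workflow!r} is not approved")

    approved = rules["approved_models"]
    # Fall back to the first approved model if the preferred one is not allowed.
    model = preferred_model if preferred_model in approved else approved[0]

    numbered = "\n".join(f"[{i}] {c}" for i, c in enumerate(context, 1))
    prompt = (
        "Use only the sources below and cite them by number.\n"
        f"{numbered}\n\nQuestion: {question}"
    )
    return Plan(model=model, prompt=prompt, requires_review=rules["requires_review"])


plan = plan_request(
    workflow="proposal_draft",
    question="What past performance applies to this solicitation?",
    context=["Contract A covered help desk support.", "Contract B covered cloud migration."],
    preferred_model="small-model",
)
print(plan.model)            # large-model
print(plan.requires_review)  # True
```

Holding the policy as data rather than scattering it through application code makes it easier to audit which workflows and models are approved.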

Knowledge Spaces combines these concerns into a governed middleware layer for enterprise and government AI. Review the Knowledge Spaces white paper for a deeper view of the control-layer pattern.

Government and Contractor Use Cases

- Solicitation and proposal knowledge bases for capture teams.

- Policy and procedure assistants for program offices.

- Compliance and audit-support workflows for government contractors.

- Contract, travel, and finance knowledge retrieval tied to approved sources.

- Mission-support knowledge systems where citation and access control matter.

What to Measure Before Launch

- Retrieval precision and recall against known-answer questions.

- Citation accuracy and source traceability.

- Permission enforcement by user role and source system.

- Latency, cost, and model routing performance.

- User feedback patterns and escalation rates.
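
The first metric in this list is straightforward to compute once a known-answer test set exists. The sketch below assumes each test case records the chunk IDs the retriever returned and the chunk IDs a reviewer marked as relevant; the IDs and the case format are made up for illustration.

```python
def precision_recall(retrieved: list[str], relevant: set[str]) -> tuple[float, float]:
    """Precision: share of retrieved chunks that are relevant.
    Recall: share of relevant chunks that were retrieved."""
    unique = set(retrieved)
    hits = len(unique & relevant)
    precision = hits / len(unique) if unique else 0.0
    recall = hits / len(relevant) if relevant else 0.0
    return precision, recall


def evaluate(cases: list[dict]) -> dict[str, float]:
    """Average precision and recall across all known-answer questions."""
    scores = [precision_recall(c["retrieved"], c["relevant"]) for c in cases]
    return {
        "mean_precision": sum(p for p, _ in scores) / len(scores),
        "mean_recall": sum(r for _, r in scores) / len(scores),
    }


# Hypothetical test set: chunk IDs returned versus chunk IDs marked relevant.
cases = [
    {"retrieved": ["a", "b", "c"], "relevant": {"a", "d"}},  # precision 1/3, recall 1/2
    {"retrieved": ["e", "f"], "relevant": {"e", "f"}},       # precision 1, recall 1
]
report = evaluate(cases)
print(round(report["mean_precision"], 3))  # 0.667
print(round(report["mean_recall"], 3))     # 0.75
```

Tracking both numbers matters because they fail differently: low precision fills the prompt with noise, while low recall means the right source never reaches the model.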

Sprinklenet builds RAG pipelines as part of broader AI integration services, combining retrieval architecture, LLM orchestration, governance, and implementation support.