AI governance becomes real when it is built into the system. Policies, committees, and acceptable-use memos matter, but they do not control what a model retrieves, which tool it calls, who can see a document, or whether an answer is logged for review.

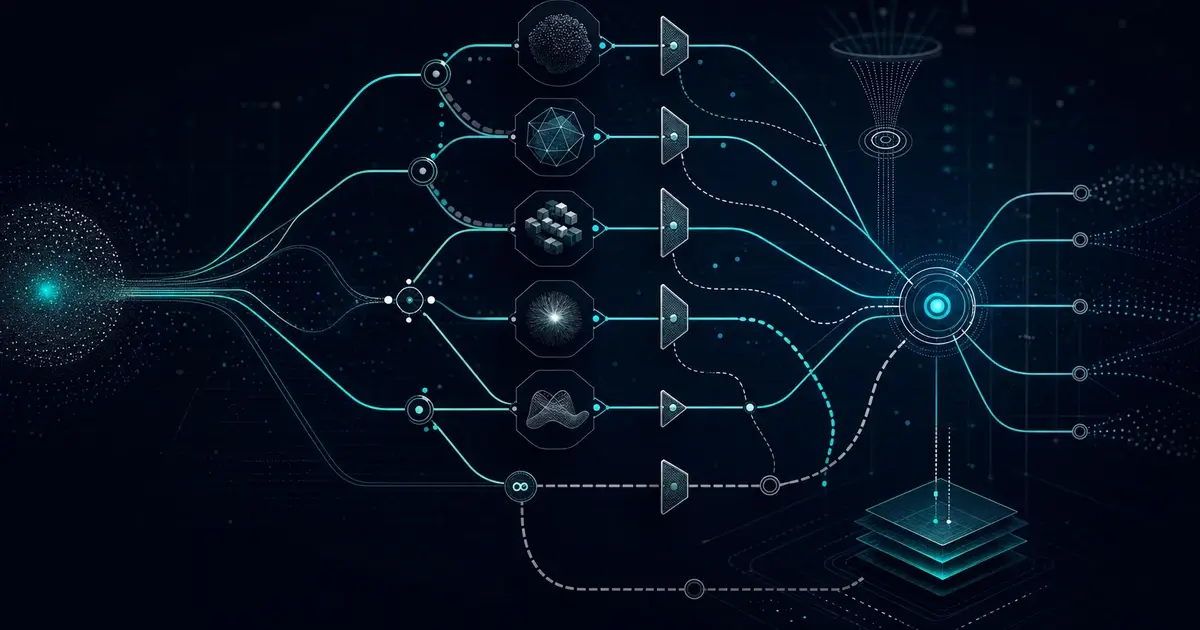

An AI governance platform gives enterprise and government teams a practical control layer for AI operations. It connects policy to execution through permissions, model configuration, retrieval controls, guardrails, evaluation, audit logs, and human review workflows.

Governance Is an Operating Layer

Governance should not sit outside the AI application as a separate checklist. It should shape how the application works. That includes what data is available, which models are approved, what tasks require review, how outputs are evaluated, and how incidents are investigated.

Sprinklenet positions Knowledge Spaces as this kind of governed middleware layer. The platform helps teams manage multi-model AI, RAG, connectors, audit events, guardrails, and controlled knowledge workflows.

Core Controls in an AI Governance Platform

- Access control: users should only retrieve and generate from information they are allowed to use.

- Model governance: approved models, model routing, model changes, and fallback behavior should be documented and observable.

- Retrieval governance: source systems, chunking, metadata, freshness, and citations need measurable quality controls.

- Prompt and tool governance: prompts, agents, tools, and automation paths should be versioned and reviewed.

- Auditability: questions, retrieved sources, generated answers, tool calls, and user actions should be logged where appropriate.
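
As one illustration of the access-control item above, the following minimal sketch filters documents by permission before any matching happens. The `Document` type, the `allowed_groups` field, and the keyword matching are hypothetical stand-ins for illustration; they do not describe how Knowledge Spaces or any particular platform implements retrieval.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    allowed_groups: frozenset[str]  # hypothetical ACL metadata stored with each document


def retrieve_for_user(query: str, user_groups: set[str], corpus: list[Document]) -> list[Document]:
    """Return documents the user is permitted to see that also match the query."""
    terms = query.lower().split()
    results = []
    for doc in corpus:
        # Permission check runs first, so restricted content never reaches matching.
        if not (doc.allowed_groups & user_groups):
            continue
        # Naive keyword match; a real system would use a search index or embeddings.
        if any(term in doc.text.lower() for term in terms):
            results.append(doc)
    return results


corpus = [
    Document("d1", "Pricing guidance for proposals", frozenset({"pricing"})),
    Document("d2", "General proposal style guide", frozenset({"all-staff"})),
]
visible = retrieve_for_user("proposal", {"all-staff"}, corpus)
print([d.doc_id for d in visible])
```

Filtering before matching, rather than after generation, means content outside the user's scope is never passed to a model in the first place.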

AI Governance for Government and Contractors

Government environments introduce additional expectations around records, procurement, security, privacy, and mission accountability. Prime contractors and subcontractors also need clear boundaries when AI systems touch customer data, proposal data, pricing, program artifacts, or compliance workflows.

Tools such as FARbot show why controlled AI matters in regulated contracting contexts. The goal is not to replace expert judgment. The goal is to make approved knowledge easier to find, cite, and apply.

What Scalable Governance Looks Like

A scalable governance model avoids two extremes. It does not block every AI workflow behind manual review. It also does not let every team deploy disconnected AI tools with no common controls. The better pattern is a shared platform layer with configurable rules by use case, data type, user role, and risk level.

That platform layer should support experimentation while preserving the ability to review, restrict, improve, and retire AI workflows over time.

Questions for Buyers

- Can administrators see which models, sources, and tools are being used?

- Can access controls follow existing enterprise permissions?

- Are answers evaluated for citation quality and retrieval accuracy?

- Can risky workflows require human review before action?

- Can the organization prove what happened after an AI-assisted decision?

Sprinklenet’s capabilities combine AI advisory, implementation, RAG architecture, and governance platform design for environments where control is part of the requirement.

2026 Buyer Note

AI governance buyers are moving away from static policy decks and toward platform controls. The question is no longer whether the organization has an AI policy. The question is whether the policy is enforced inside retrieval, model routing, permissions, logging, and review workflows.

A Governance Platform Should Make Decisions Observable

Useful governance gives leaders a record of how AI-supported decisions were made. That means the platform should show which user asked the question, what sources were retrieved, which model generated the answer, what guardrails fired, and whether a human review step changed the result.
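
A record like the one described above could be captured as a small structured event. This is a sketch under assumptions: the `DecisionRecord` name and every field name are invented for illustration, and a production schema would likely carry more detail.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class DecisionRecord:
    """Hypothetical audit record for one AI-assisted answer."""
    user_id: str                      # which user asked the question
    question: str
    source_ids: list[str]             # what sources were retrieved
    model: str                        # which model generated the answer
    guardrails_fired: list[str] = field(default_factory=list)
    review_changed_result: Optional[bool] = None  # None means no human review occurred
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self) -> str:
        # Sorted keys keep log lines stable and easy to compare.
        return json.dumps(asdict(self), sort_keys=True)


record = DecisionRecord(
    user_id="u-123",
    question="Which clause covers data rights?",
    source_ids=["doc-7", "doc-12"],
    model="example-model-v1",
    guardrails_fired=["pii_filter"],
    review_changed_result=False,
)
print(record.to_json())
```

Keeping "no review happened" (`None`) distinct from "review happened and changed nothing" (`False`) lets a later investigation tell the two cases apart.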

This is especially important for government, prime contractor, and regulated enterprise environments where AI outputs may influence procurement, compliance, program management, customer support, or internal policy interpretation.

Minimum Useful Control Set

- Identity-aware retrieval so users cannot retrieve content outside their access scope.

- Model routing policy so sensitive work only uses approved providers and deployment environments.

- Prompt and tool versioning so changes can be reviewed and rolled back.

- Audit events covering retrieval, model calls, tool calls, moderation, configuration changes, and user actions.

- Exception handling for uncertain answers, missing sources, policy conflicts, and review escalation.
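
The model routing and exception handling items can be sketched together. In the example below, the sensitivity labels, provider names, and environment names are all hypothetical, and the approval table is an assumed policy format rather than any vendor's actual configuration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOption:
    name: str
    provider: str
    environment: str


# Hypothetical policy: (provider, environment) pairs approved per sensitivity level.
APPROVED = {
    "public": {
        ("vendor-a", "commercial"),
        ("vendor-b", "commercial"),
        ("vendor-a", "gov-cloud"),
    },
    "sensitive": {("vendor-a", "gov-cloud")},
}


class NoApprovedModel(Exception):
    """Raised so callers can escalate to review instead of silently falling back."""


def route(sensitivity: str, candidates: list[ModelOption]) -> ModelOption:
    """Pick the first candidate approved for this sensitivity level."""
    allowed = APPROVED.get(sensitivity, set())  # unknown levels get no approvals
    for option in candidates:  # candidates are assumed to be in preference order
        if (option.provider, option.environment) in allowed:
            return option
    raise NoApprovedModel(f"no approved model for sensitivity={sensitivity!r}")


candidates = [
    ModelOption("fast", "vendor-b", "commercial"),
    ModelOption("gov", "vendor-a", "gov-cloud"),
]
print(route("public", candidates).name)
print(route("sensitive", candidates).name)
```

Raising an exception when nothing is approved, instead of defaulting to some model, makes the policy conflict visible and gives the review escalation path something to act on.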

Related Reading

See "AI security in practice" for the security layer and "Federal AI governance" for an agency roadmap.

The Operating Model Behind AI Governance

The platform is only part of the governance problem. Organizations also need an operating model that defines who approves use cases, who owns model configuration, who reviews high-risk outputs, who manages source data quality, and who can change guardrail policy. Without those decision rights, governance becomes a set of documents with no operational force.

A practical operating model usually defines risk tiers by workflow. A low-risk internal drafting assistant may need basic logging and user guidance. A system that supports acquisition, compliance, finance, HR, or mission analysis needs stronger review paths, identity-aware retrieval, source traceability, and incident response. The governance platform should make those tiers enforceable without forcing every use case through the same bottleneck.
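
One way to make such tiers enforceable is a lookup from workflow to tier to required controls. The sketch below uses two tiers and made-up workflow names. The control names paraphrase the paragraph above, and the choice to treat unregistered workflows as high risk is an assumption of this example, not a stated requirement.

```python
from enum import IntEnum


class RiskTier(IntEnum):
    LOW = 1
    HIGH = 2


# Hypothetical registry of workflows and their assigned tiers.
WORKFLOW_TIERS = {
    "internal-drafting": RiskTier.LOW,
    "acquisition-support": RiskTier.HIGH,
}

# Controls each tier must have enabled before a workflow can run.
TIER_CONTROLS = {
    RiskTier.LOW: {"logging", "user_guidance"},
    RiskTier.HIGH: {
        "logging",
        "user_guidance",
        "human_review",
        "identity_aware_retrieval",
        "source_traceability",
        "incident_response",
    },
}


def required_controls(workflow: str) -> set[str]:
    """Return a copy of the control set for a workflow's tier."""
    # Assumption: workflows missing from the registry get the strictest tier.
    tier = WORKFLOW_TIERS.get(workflow, RiskTier.HIGH)
    return set(TIER_CONTROLS[tier])


def needs_human_review(workflow: str) -> bool:
    return "human_review" in required_controls(workflow)


print(needs_human_review("internal-drafting"))
print(needs_human_review("acquisition-support"))
```

Because the low-risk tier carries only logging and guidance, drafting workflows skip the review queue while higher-risk workflows inherit the full set.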

What To Avoid

- Policy-only governance: acceptable-use memos do not enforce retrieval permissions or model routing.

- Tool sprawl: disconnected AI apps create inconsistent logging, unclear data handling, and weak administrator visibility.

- One-size-fits-all approval: low-risk experimentation and high-risk operational workflows should not use the same review process.

- Unowned data quality: governance fails when no team owns stale, duplicate, or conflicting source material.

- Invisible model changes: production AI needs a record of model, prompt, retrieval, and policy updates.

The better pattern is a shared governance layer with configurable controls. Teams can move quickly where risk is low, while sensitive workflows inherit stronger controls automatically.

Governance Metrics That Matter

A governance platform should produce evidence that leaders can act on. Useful metrics include active AI workflows by risk tier, unresolved guardrail events, retrieval failures, stale source rates, human review volume, model usage by provider, and incidents tied to prompt, retrieval, or connector changes. These measures help governance teams move from opinion to operations.
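
Several of these metrics can be derived directly from audit events. The sketch below assumes a simple event shape (`type`, `provider`, `resolved`, `ok`) that is invented for illustration, and the sample events are made-up inputs, not real measurements.

```python
from collections import Counter

# Made-up sample events in a hypothetical audit log format.
events = [
    {"type": "model_call", "provider": "vendor-a"},
    {"type": "model_call", "provider": "vendor-a"},
    {"type": "model_call", "provider": "vendor-b"},
    {"type": "guardrail", "resolved": False},
    {"type": "guardrail", "resolved": True},
    {"type": "retrieval", "ok": False},
    {"type": "retrieval", "ok": True},
    {"type": "human_review"},
]


def summarize(events: list[dict]) -> dict:
    """Aggregate audit events into a few governance metrics."""
    usage = Counter(e["provider"] for e in events if e["type"] == "model_call")
    return {
        "model_usage_by_provider": dict(usage),
        "unresolved_guardrail_events": sum(
            1 for e in events if e["type"] == "guardrail" and not e.get("resolved", False)
        ),
        "retrieval_failures": sum(
            1 for e in events if e["type"] == "retrieval" and not e.get("ok", True)
        ),
        "human_review_volume": sum(1 for e in events if e["type"] == "human_review"),
    }


summary = summarize(events)
print(summary)
```

Computing metrics from the same events used for audit keeps the numbers traceable to individual records when a figure needs explaining.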

The point is not to create more dashboards. The point is to identify where AI use is growing, where controls are working, and where the organization needs better training, source cleanup, or platform configuration.

Jamie Thompson is founder and CEO of Sprinklenet, focused on governed AI implementation, systems integration, and Knowledge Spaces.

His work centers on moving AI from pilot activity into production with clearer retrieval, workflow, evaluation, and audit controls.