RAG system development is one of the most important building blocks in enterprise AI implementation. Retrieval-augmented generation (RAG) connects language models to approved organizational knowledge so users can ask questions, find evidence, and work from current source material instead of relying only on model memory.

For executives and government decision-makers, RAG is not just a technical pattern. It is a governance pattern. A well-designed RAG system can show where an answer came from, limit retrieval to approved sources, respect permissions, and improve trust in AI-assisted workflows. The Knowledge Spaces white paper explains this control-layer approach in more detail.

RAG Systems vs Fine-Tuning vs Prompting

Prompting is useful for instructions, style, and task framing. Fine-tuning can help when an organization needs consistent behavior or domain-specific output patterns. RAG is different because it gives the model access to external knowledge at query time, without changing the model itself.

- Use prompting when the model already has enough context and needs better task instructions.

- Use fine-tuning when behavior or output format needs to become more consistent across many examples.

- Use RAG when the AI system must answer from current, approved, or private knowledge sources.

Most enterprise systems use a combination. The prompt defines the role and constraints. The retrieval layer brings in source material. The orchestration layer selects models, tools, guardrails, and review paths.

How RAG Works in Enterprise Environments

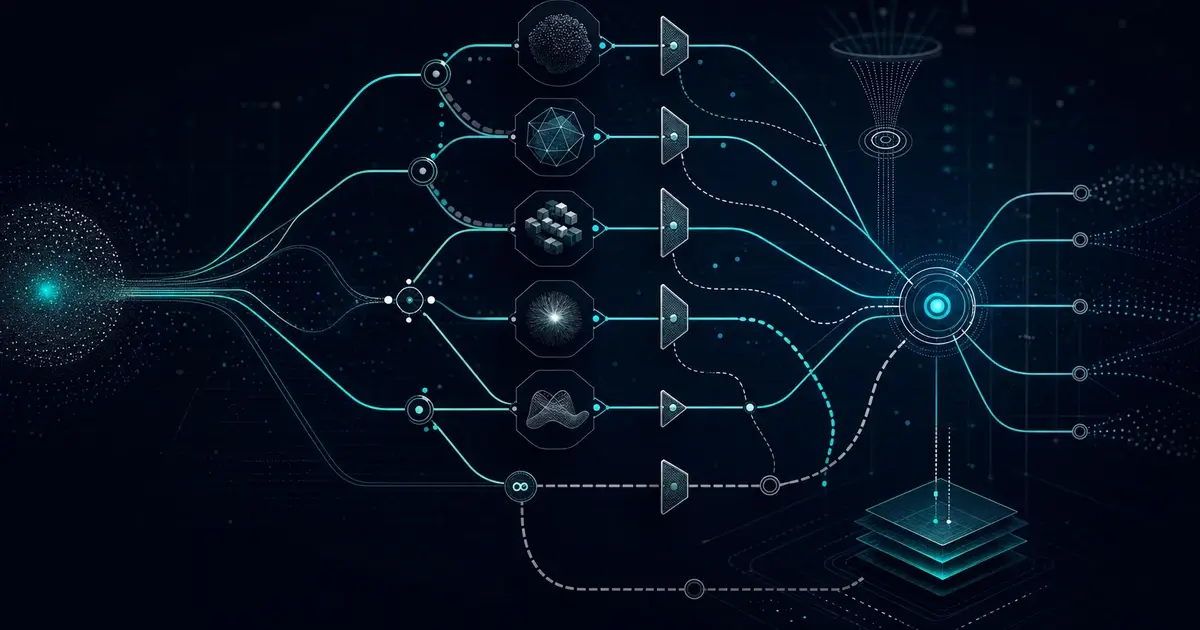

A production RAG system starts with source systems: document repositories, knowledge bases, contract libraries, policy archives, engineering documentation, customer records, or case files. The system ingests these sources, extracts text, preserves metadata, chunks content, generates embeddings, and stores searchable vectors with links back to the original source.
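The chunking step described above can be sketched in a few lines. This is a minimal illustration, not a production parser: `Chunk`, `chunk_document`, and the word-based window sizes are hypothetical names and values chosen for the example, and embedding generation is left out because it depends on the model an organization selects.

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    source_id: str   # link back to the original document
    position: int    # order of this chunk within the document
    text: str
    metadata: dict   # owner, status, dates, and similar fields carried from the source


def chunk_document(source_id, text, metadata, max_words=200, overlap=40):
    """Split text into overlapping word windows that keep their source link."""
    if overlap >= max_words:
        raise ValueError("overlap must be smaller than max_words")
    words = text.split()
    step = max_words - overlap
    chunks = []
    for position, start in enumerate(range(0, len(words), step)):
        window = words[start:start + max_words]
        chunks.append(Chunk(source_id, position, " ".join(window), dict(metadata)))
        if start + max_words >= len(words):
            break  # the last window already reached the end of the document
    return chunks
```

Each chunk keeps `source_id` and a copy of the document metadata, so a retrieved passage can still be traced to its original source after it is embedded and stored.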

At query time, the system retrieves relevant passages, sends them to the model with task instructions, and returns an answer with citations or supporting context. The quality of that answer depends on the full pipeline, not just the model.
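The query-time flow can be sketched as follows. This is an illustration under stated assumptions: `embed` is a toy word-count stand-in for a real embedding model, and `retrieve` and `build_prompt` are hypothetical helper names rather than any product's API. The point is the shape of the flow: score passages, keep the best ones, and hand them to the model with numbered source labels it can cite.

```python
import math
from collections import Counter


def embed(text):
    """Toy stand-in for an embedding model: lowercase word counts."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query, passages, top_k=2):
    """Return up to top_k passages with a non-zero similarity to the query."""
    q = embed(query)
    scored = [(cosine(q, embed(p["text"])), p) for p in passages]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [p for score, p in scored[:top_k] if score > 0]


def build_prompt(query, retrieved):
    """Assemble task instructions plus numbered, source-labeled passages."""
    context = "\n".join(
        f"[{i}] ({p['source']}) {p['text']}" for i, p in enumerate(retrieved, 1)
    )
    return (
        "Answer using only the numbered passages and cite them like [1].\n"
        "If the passages do not contain the answer, say so.\n\n"
        f"Passages:\n{context}\n\nQuestion: {query}"
    )
```

Because the prompt carries source labels, the answer can cite them, and a reviewer can check each citation against the original document.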

Enterprise and Government Use Cases

- Policy search: helping teams find authoritative guidance across large document sets.

- Proposal support: retrieving past performance, compliance language, and technical capabilities for capture teams.

- Program knowledge: giving analysts and project teams access to current program documentation.

- Contractor compliance: supporting Federal Acquisition Regulation (FAR), Cost Accounting Standards (CAS), travel, expense, and audit-ready data workflows through controlled knowledge retrieval.

- Customer support: grounding assistant answers in approved product, policy, and process documentation.

Why Retrieval Quality Matters

Poor retrieval produces confident answers grounded in the wrong source material. Good RAG system development measures retrieval precision, citation quality, answer accuracy, freshness, access control, and performance under realistic user queries.

Evaluation should include known-answer tests, adversarial queries, permission checks, stale-data checks, and human review. Without this testing layer, RAG becomes a demo pattern rather than a production system.
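Retrieval precision can be made concrete with two standard metrics, precision at k and recall at k. The sketch below assumes each test query comes with a hand-labeled set of relevant document IDs and that retrieved IDs are unique; the function names are illustrative.

```python
def precision_at_k(retrieved, relevant, k):
    """Fraction of the top-k retrieved IDs that are labeled relevant."""
    if k <= 0:
        raise ValueError("k must be positive")
    hits = sum(1 for doc_id in retrieved[:k] if doc_id in relevant)
    return hits / k


def recall_at_k(retrieved, relevant, k):
    """Fraction of all relevant IDs that appear in the top-k results."""
    if not relevant:
        return 0.0
    hits = len(set(retrieved[:k]) & set(relevant))
    return hits / len(relevant)
```

For example, if the top two results contain one relevant document, precision at 2 is 0.5. Tracking both numbers over a fixed query set shows whether a change to chunking or ranking helped or hurt.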

Where Sprinklenet Fits

Sprinklenet builds RAG systems as part of broader AI integration services. Knowledge Spaces serves as a governed middleware layer for retrieval, model orchestration, audit logs, permissions, and controlled data integration. That makes RAG part of an operating model rather than a standalone feature.

What Makes RAG Development Harder Than It Looks

RAG demos are easy because the data is usually clean, small, and intentionally selected. Enterprise repositories are different. They contain duplicate files, scanned PDFs, inconsistent folder structures, outdated versions, broken tables, missing metadata, and documents with overlapping authority. The RAG system has to handle that reality without giving users false confidence.

The development work therefore has to include more than embeddings. Teams need document parsing strategy, metadata enrichment, source authority rules, access-control mapping, update schedules, and a process for retiring stale content. They also need a way to identify gaps: questions users ask that the knowledge base cannot answer well.
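One simple way to surface gaps is to log each question with its best retrieval score and count the questions that repeatedly score low. The sketch below assumes such a log exists as `(question, top_score)` pairs; the threshold values and the function name `find_knowledge_gaps` are hypothetical and would need tuning against real traffic.

```python
from collections import Counter


def find_knowledge_gaps(query_log, score_threshold=0.3, min_occurrences=2):
    """Return (question, count) pairs for questions that repeatedly retrieve poorly."""
    weak = Counter()
    for question, top_score in query_log:
        if top_score < score_threshold:
            # Normalize case and whitespace so near-identical questions are counted together.
            weak[" ".join(question.lower().split())] += 1
    return [(q, n) for q, n in weak.most_common() if n >= min_occurrences]
```

The output is a worklist for content owners: each entry is a question users keep asking that the current collection does not cover well.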

Evaluation Plan for Enterprise RAG

- Known-answer testing: compare system answers against questions with verified source material.

- Citation review: check whether the cited passages actually support the claim.

- Permission testing: verify that users cannot retrieve content outside their authorization scope.

- Freshness testing: ensure outdated documents do not outrank current authoritative sources.

- Negative testing: confirm the system says it does not know when the answer is missing.

These tests should become part of the operating cadence. RAG quality improves when the team treats retrieval as a living system rather than a one-time indexing job.
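Three of the checks above (known-answer, permission, and negative testing) can run as an automated suite. The sketch below is one possible shape, not a standard harness: `Answer`, `EvalCase`, and `run_case` are hypothetical names, and `answer_fn` stands for whatever function wraps the deployed RAG system. Citation review and freshness testing usually need human judgment or document metadata, so they are not covered here.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Answer:
    text: str
    cited_sources: list[str]


@dataclass
class EvalCase:
    question: str
    user_groups: frozenset[str]
    expected_source: Optional[str]  # None means the system should decline to answer
    forbidden_sources: frozenset[str] = frozenset()


def run_case(case: EvalCase, answer_fn: Callable[[str, frozenset], Answer]) -> list[str]:
    """Run one case and return a list of failure messages (empty means pass)."""
    answer = answer_fn(case.question, case.user_groups)
    failures = []
    # Permission testing: nothing outside the user's authorization scope may be cited.
    leaked = set(answer.cited_sources) & case.forbidden_sources
    if leaked:
        failures.append(f"permission leak: {sorted(leaked)}")
    if case.expected_source is None:
        # Negative testing: a missing answer should produce no citations.
        if answer.cited_sources:
            failures.append("expected the system to decline, but it cited sources")
    elif case.expected_source not in answer.cited_sources:
        # Known-answer testing: the verified source must be among the citations.
        failures.append(f"expected citation of {case.expected_source}")
    return failures
```

Running the same cases on a schedule, and after every re-index, turns the evaluation plan into a regression suite.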

Data Preparation Workstream

Most RAG projects benefit from a dedicated data preparation workstream. That workstream inventories source systems, identifies authoritative collections, removes duplicates, normalizes file names and metadata, captures document ownership, and defines update rules. It also determines which content should not be indexed at all.
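Duplicate removal is one part of this workstream that automates well. The sketch below flags exact duplicates by hashing normalized text; the document dictionaries with `path` and `text` keys are an assumed shape for the example, and near-duplicates such as lightly edited versions would need a fuzzier technique than this.

```python
import hashlib


def content_fingerprint(text):
    """Hash the text after normalizing case and whitespace."""
    normalized = " ".join(text.lower().split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def remove_duplicates(documents):
    """Return (unique_documents, duplicates), keeping the first copy of each text.

    Each entry in duplicates is (duplicate_path, kept_path) so the decision can be reviewed.
    """
    seen = {}
    duplicates = []
    for doc in documents:
        fingerprint = content_fingerprint(doc["text"])
        if fingerprint in seen:
            duplicates.append((doc["path"], seen[fingerprint]["path"]))
        else:
            seen[fingerprint] = doc
    return list(seen.values()), duplicates
```

Returning the duplicate pairs instead of deleting them silently gives document owners a record to review before anything is removed from scope.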

This work is not glamorous, but it is where many RAG systems either gain or lose trust. If outdated policy, draft language, and approved guidance all sit in the same index with no metadata distinction, users will eventually receive answers grounded in the wrong source.
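One way to act on this is to require status and effective-date metadata on every document and to filter on it before indexing. The sketch below assumes each document carries hypothetical `policy_id`, `status`, and `effective` fields; it keeps only approved documents and, for each policy, only the most recent approved version. Some teams may prefer to index older versions with a clear label instead of excluding them.

```python
from datetime import date


def select_indexable(documents):
    """Keep the latest approved version of each policy; skip drafts and superseded versions."""
    latest = {}
    for doc in documents:
        if doc["status"] != "approved":
            continue  # drafts and retired content never reach the index
        current = latest.get(doc["policy_id"])
        if current is None or doc["effective"] > current["effective"]:
            latest[doc["policy_id"]] = doc
    return list(latest.values())
```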

Deployment Patterns

Some teams start with a single knowledge assistant for one document collection. Others need a reusable platform that supports multiple spaces, each with its own sources, users, permissions, and evaluation set. The second pattern takes more planning, but it is usually better for enterprise and government environments because it creates a repeatable way to add new use cases without rebuilding the architecture.
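The reusable-platform pattern can be pictured as a registry of space definitions. The sketch below is purely illustrative and is not the API of any product: `SpaceConfig` and `SpaceRegistry` are hypothetical names, and the rule that a space cannot be registered without an evaluation set is an assumed design choice.

```python
from dataclasses import dataclass, field


@dataclass
class SpaceConfig:
    name: str
    sources: list            # repositories or collections this space may index
    allowed_groups: set      # user groups permitted to query this space
    eval_questions: list = field(default_factory=list)


class SpaceRegistry:
    def __init__(self):
        self._spaces = {}

    def register(self, space):
        """Add a space, enforcing the same launch rules for every use case."""
        if space.name in self._spaces:
            raise ValueError(f"space already exists: {space.name}")
        if not space.eval_questions:
            raise ValueError("a space needs an evaluation set before launch")
        self._spaces[space.name] = space

    def spaces_for(self, user_groups):
        """Names of the spaces a user may query, based on group membership."""
        groups = set(user_groups)
        return sorted(
            name for name, space in self._spaces.items()
            if space.allowed_groups & groups
        )
```

Adding a new use case then means writing a new configuration entry, not a new pipeline, and the launch checks apply to it automatically.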

That repeatability is where RAG becomes a platform capability. Once ingestion, retrieval, permission enforcement, citation review, and evaluation are standardized, new knowledge collections can launch faster while still using the same governance model.