Bottom line: Federal AI policy is no longer about memos and frameworks. It is about how you architect, deploy, and govern LLMs in production – and the contractors that understand this at the model level will win the work.

Federal AI policy now shapes how agencies evaluate vendors, how prime contractors structure AI teams, and how every contractor delivering AI is expected to document, govern, and operate systems in practice. The firms that treat policy as a compliance checkbox – rather than an engineering discipline – will lose ground to the ones that can translate requirements into governed model deployments.

The mistake most firms make is assuming policy only matters at the proposal stage. In reality, federal AI policy increasingly drives architecture decisions: which foundation models you select (OpenAI vs. Claude vs. Gemini vs. Llama), how you handle data residency and model routing, how you implement retrieval-augmented generation with proper source grounding, and how you maintain audit trails across every LLM interaction. This is not abstract governance work. It is engineering.

What Federal Buyers Actually Care About

Federal procurement teams are not asking vendors to recite executive orders. They are asking operational questions that directly map to how you build and run AI systems:

Model Governance

Which LLMs are you using? Can you swap models without redesigning the system? Can you enforce per-model guardrails – content filtering on OpenAI, different token limits on Claude, restricted tool access on open-source models like Llama?
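One way to make those questions answerable is to keep guardrail settings in a per-model registry and have the application refer to workflows rather than model names. The sketch below is a minimal illustration under that assumption; the registry keys, the `ModelPolicy` fields, and the token limits are hypothetical and do not reflect any vendor's actual limits.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ModelPolicy:
    """Guardrail settings attached to one model (all values are illustrative)."""
    provider: str
    max_output_tokens: int
    content_filter: bool = True
    allowed_tools: frozenset = field(default_factory=frozenset)


# Hypothetical registry: the application refers to workflows, never to model names.
POLICIES = {
    "openai-general": ModelPolicy("openai", 2048, allowed_tools=frozenset({"search"})),
    "claude-general": ModelPolicy("anthropic", 4096, allowed_tools=frozenset({"search"})),
    "llama-onprem": ModelPolicy("self-hosted", 1024),  # no tool access by default
}

# Swapping a workflow to another model is a one-line change to this mapping.
WORKFLOW_MODEL = {"far-assistant": "claude-general"}


def policy_for(workflow: str) -> ModelPolicy:
    """Resolve the guardrail policy for a workflow via its assigned model."""
    return POLICIES[WORKFLOW_MODEL[workflow]]


def tool_allowed(workflow: str, tool: str) -> bool:
    """A tool call is permitted only if the active model's policy lists it."""
    return tool in policy_for(workflow).allowed_tools
```

Reassigning `WORKFLOW_MODEL["far-assistant"]` to `"llama-onprem"` changes the token limit and tool access for that workflow without touching the calling code, which is the property buyers are probing for.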

Explainability & Grounding

Can every AI response be traced to approved source documents? RAG is not optional in federal environments – hallucination is a compliance event. Buyers expect citation, attribution, and source linking on every response.
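A simple post-generation check can enforce part of this. The sketch below assumes the model is prompted to cite sources with a `[source:ID]` tag; that tag format, the document IDs, and the `APPROVED_SOURCES` allow-list are all hypothetical. It verifies only that citations exist and point at approved, retrieved documents, not that the cited text actually supports the claim.

```python
import re

# Hypothetical allow-list of approved source document IDs.
APPROVED_SOURCES = {"FAR-15.404", "FAR-52.212"}

# Assumed citation convention: [source:DOCUMENT-ID]
CITATION_PATTERN = re.compile(r"\[source:([A-Za-z0-9.\-]+)\]")


def check_grounding(response: str, retrieved_ids: set) -> dict:
    """Classify the citations found in a response.

    A response passes only if it cites at least one source and every cited
    source was both retrieved for this query and is on the approved list.
    """
    cited = set(CITATION_PATTERN.findall(response))
    valid = cited & retrieved_ids & APPROVED_SOURCES
    invalid = cited - valid
    return {
        "cited": sorted(cited),
        "invalid": sorted(invalid),
        "grounded": bool(cited) and not invalid,
    }
```

A response that fails this check can be blocked, regenerated, or routed to human review, depending on program policy.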

Data Sovereignty

Where does the data go when it hits the model? Buyers look for stateless processing, a commitment that government data is never used for LLM training, FedRAMP-authorized infrastructure, and the ability to run on-prem or air-gapped when required.

Audit & Accountability

Can you log every prompt, every response, every model selection decision, and every document retrieved? A catalog of 64+ auditable event types is the baseline, not the ceiling. Programs need artifacts that survive turnover, reviews, and IG audits.
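To make "artifacts that survive audits" concrete, here is a minimal sketch of a tamper-evident audit log, in which each record carries the hash of the previous one. The field names and event types are assumptions for illustration, and a real system would write to durable, access-controlled storage rather than an in-memory list.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first record


def append_audit_event(log: list, event_type: str, user_id: str, details: dict) -> dict:
    """Append a record whose hash covers its content and the previous record's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "event_type": event_type,
        "user_id": user_id,
        "details": details,
        "prev_hash": prev_hash,
    }
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    record["hash"] = hashlib.sha256(payload).hexdigest()
    log.append(record)
    return record


def verify_chain(log: list) -> bool:
    """Recompute every hash and confirm each record links to its predecessor."""
    prev_hash = GENESIS_HASH
    for record in log:
        body = {k: v for k, v in record.items() if k != "hash"}
        payload = json.dumps(body, sort_keys=True).encode("utf-8")
        if record["prev_hash"] != prev_hash:
            return False
        if hashlib.sha256(payload).hexdigest() != record["hash"]:
            return False
        prev_hash = record["hash"]
    return True
```

Because each hash depends on the one before it, editing or deleting an earlier entry causes `verify_chain` to fail, which gives reviewers a cheap integrity check.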

Policy-Adaptive Architecture

Federal AI policy is still evolving rapidly. When a new executive order changes evaluation requirements or an agency updates its AI governance framework, can your system absorb the change without a rebuild? Model-agnostic platforms with configurable guardrails win here.
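As a rough illustration of "absorbing the change without a rebuild", governance rules can live in a data document that is validated and loaded at runtime. The rule names below (`max_output_tokens`, `blocked_topics`, `require_citations`) are hypothetical, and the topic check is a deliberately naive substring match.

```python
import json

REQUIRED_KEYS = {"max_output_tokens", "blocked_topics", "require_citations"}

# Hypothetical rules document; in practice this might live in a versioned config store.
RULES_V1 = (
    '{"max_output_tokens": 1024, '
    '"blocked_topics": ["medical advice"], '
    '"require_citations": true}'
)


def load_rules(raw: str) -> dict:
    """Parse a rules document and reject it if a required key is missing."""
    rules = json.loads(raw)
    missing = REQUIRED_KEYS - set(rules)
    if missing:
        raise ValueError(f"rules missing keys: {sorted(missing)}")
    return rules


def is_blocked(prompt: str, rules: dict) -> bool:
    """Naive substring check against the configured blocked topics."""
    lowered = prompt.lower()
    return any(topic.lower() in lowered for topic in rules["blocked_topics"])
```

When a policy update adds a restricted topic, the change is a new rules document rather than a code release, and the validation step keeps a malformed document from silently disabling a control.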

Where Policy Meets the Model Layer

Here is what most contractors miss: federal AI policy does not live in a separate universe from model selection and system architecture. The policies intertwine directly with technical decisions that were previously considered “just engineering.”

Model routing is a policy decision. When an agency requires that no data leave U.S. infrastructure, that immediately constrains which API endpoints you can use. OpenAI models deployed through Azure Government behave differently from OpenAI’s commercial API. Anthropic’s Claude is available through Amazon Bedrock in AWS GovCloud. Google’s Gemini has its own government pathway through Vertex AI. Open-source models such as Meta’s Llama and Mistral’s models can run on-premises with full data sovereignty, but they require operational infrastructure that your team has to stand up and maintain. Choosing the right model for a given workflow is not just a performance question – it is a compliance question.
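The idea that compliance constraints come before performance or cost can be sketched as a two-step router: filter endpoints by data-handling requirements, then choose among the survivors. The endpoint names, flags, and cost ranks below are hypothetical; real eligibility depends on the agency's own authorizations.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Endpoint:
    name: str
    us_only: bool    # data stays on U.S. infrastructure
    on_prem: bool    # runs inside the agency boundary
    cost_rank: int   # lower is cheaper (illustrative ordering only)


# Hypothetical catalog of deployment options.
ENDPOINTS = [
    Endpoint("commercial-api", us_only=False, on_prem=False, cost_rank=1),
    Endpoint("gov-cloud-hosted", us_only=True, on_prem=False, cost_rank=2),
    Endpoint("on-prem-open-weights", us_only=True, on_prem=True, cost_rank=3),
]


def eligible_endpoints(require_us_only: bool, require_on_prem: bool) -> list:
    """Filter by compliance constraints first, then order the survivors by cost."""
    allowed = [
        e for e in ENDPOINTS
        if (e.us_only or not require_us_only) and (e.on_prem or not require_on_prem)
    ]
    return sorted(allowed, key=lambda e: e.cost_rank)


def route(require_us_only: bool, require_on_prem: bool) -> Endpoint:
    """Pick the cheapest endpoint that satisfies every constraint, or fail loudly."""
    candidates = eligible_endpoints(require_us_only, require_on_prem)
    if not candidates:
        raise LookupError("no endpoint satisfies the data-handling constraints")
    return candidates[0]
```

Failing loudly when nothing qualifies is a deliberate choice here: silently falling back to a non-compliant endpoint is the outcome the policy exists to prevent.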

RAG architecture is a governance architecture. Retrieval-augmented generation is not just a technique for reducing hallucination. In federal environments, it is the mechanism through which you enforce source grounding, control what the AI is allowed to know, and create an auditable chain from question to answer to source document. The quality of your chunking strategy, your embedding model and retrieval approach (dense, sparse, or hybrid), and your retrieval evaluation pipeline directly determine whether your system can pass a compliance review.
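A retrieval evaluation pipeline can start very small. The sketch below computes recall@k, one common retrieval metric, over a hand-labeled test set; the document IDs and the choice of k are placeholders, and a real pipeline would likely track additional metrics over time.

```python
def recall_at_k(retrieved: list, relevant: set, k: int) -> float:
    """Fraction of the relevant documents that appear in the top-k results."""
    if not relevant:
        raise ValueError("relevant set must not be empty")
    top_k = set(retrieved[:k])
    return len(top_k & relevant) / len(relevant)


def evaluate(test_cases: list, k: int = 3) -> float:
    """Mean recall@k over labeled (retrieved_ids, relevant_ids) pairs."""
    scores = [recall_at_k(retrieved, relevant, k) for retrieved, relevant in test_cases]
    return sum(scores) / len(scores)
```

Running the same labeled set before and after a chunking or embedding change gives a comparable number to attach to the change record.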

Guardrails are not optional features. PII detection, prompt injection prevention, content moderation, allowed topics, and output constraints are not nice-to-have layers you add at the end. In federal AI delivery, they are foundational requirements. When an agency asks, “Can the system be governed?”, it is asking whether you built governance into the architecture or bolted it on as an afterthought.
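As one small example of a guardrail built into the request path, the sketch below redacts two kinds of PII before a prompt reaches a model and reports which detectors fired so the event can be logged. The patterns are deliberately simplified assumptions; production PII detection needs far broader coverage than two regular expressions.

```python
import re

# Simplified patterns for illustration only.
PII_PATTERNS = {
    "ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
}


def redact_pii(text: str) -> tuple:
    """Replace matched PII with a typed placeholder and report which types fired."""
    triggered = []
    for label, pattern in PII_PATTERNS.items():
        text, count = pattern.subn(f"[REDACTED-{label.upper()}]", text)
        if count:
            triggered.append(label)
    return text, triggered
```

Returning the list of triggered detectors alongside the redacted text lets the same call feed both the model request and the audit trail.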

The real shift: Federal buyers are not evaluating “AI capabilities” in the abstract. They are evaluating whether you can deploy Claude or OpenAI or Gemini in a way that is governed, grounded, auditable, and operationally sustainable inside their specific security and compliance environment.

Where Contractors Get This Wrong

Many firms still position AI delivery as a model performance challenge. They lead with benchmark scores, demo impressive outputs, and talk about “advanced” capabilities. This is incomplete for federal work. The harder problem is connecting model behavior to governance frameworks and operational controls.

A contractor might demonstrate strong performance with OpenAI in a sandbox demo and still have no answer for what happens when the agency shifts to a different model provider – whether driven by cost, evolving performance benchmarks, or changing geopolitical and procurement dynamics around AI companies. They might show beautiful RAG results but have no evaluation pipeline to measure retrieval quality as models and data evolve over time. They might pass a security review but have no plan for when a data classification change requires moving from a commercial API to an on-premises deployment where only open-source models like Llama are viable.

This is where implementation depth becomes decisive. A government-ready AI delivery team must simultaneously address security, compliance, and deployment constraints alongside multi-model orchestration, retrieval pipeline engineering, and production monitoring. If those topics are split across too many vendors and handoffs, programs slow down and stakeholder confidence erodes.

How Policy Changes What “Good” Looks Like

Regulatory pressure is redefining quality in federal AI delivery. Buyers increasingly expect vendors that can support:

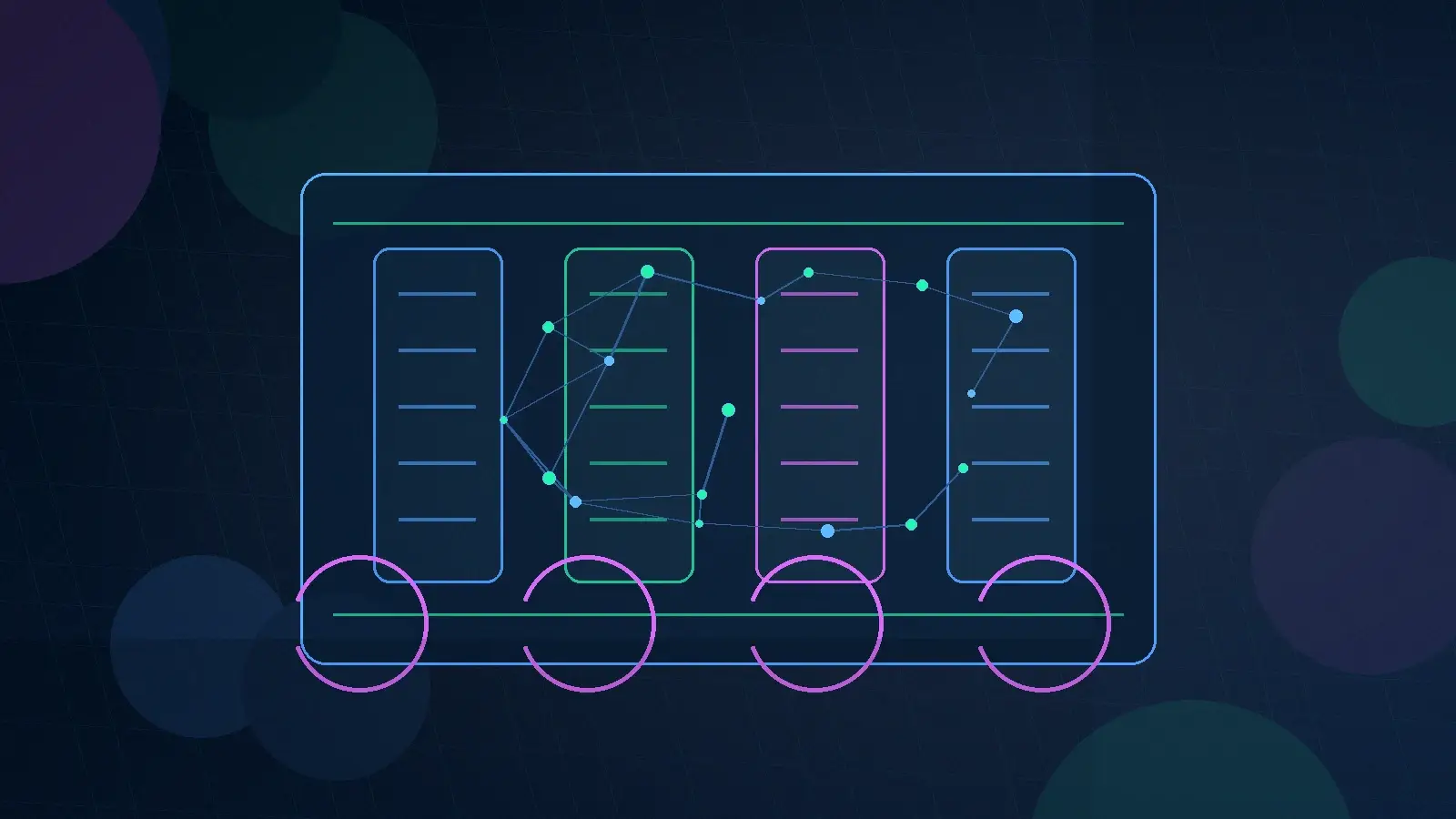

- Multi-model orchestration – routing queries across OpenAI, Anthropic, Google, xAI, Groq, and open-source models based on use case, data sensitivity, and cost

- RAG with source attribution – every response grounded in approved documents with citations, not just “the model said so”

- Pre-release evaluation protocols – systematic testing of retrieval quality, response accuracy, and guardrail effectiveness before deployment

- Access-aware retrieval – role-based controls that determine which documents and data sources each user’s AI interactions can draw from

- Comprehensive logging – prompt, response, model used, documents retrieved, guardrails triggered, and user identity captured on every interaction

- Human oversight workflows – clear escalation paths and review mechanisms for high-impact AI-assisted decisions

- Architecture that absorbs policy changes – configurable guardrails, model-agnostic routing, and governance rules that can be updated without code changes
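The access-aware retrieval item in the list above can be illustrated with a role filter applied to retrieved documents before any text is placed in the prompt. The `Document` type and role names are hypothetical, and real deployments would typically enforce this in the retrieval store itself as well.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    allowed_roles: frozenset


def filter_by_role(candidates: list, user_roles: set) -> list:
    """Keep only documents that share at least one role with the user.

    Filtering happens before the text reaches the prompt, so the model never
    sees content the user is not cleared for.
    """
    return [d for d in candidates if d.allowed_roles & user_roles]
```

Filtering before prompt assembly, rather than asking the model to withhold restricted content, keeps the access decision in code that can be tested and audited.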

The value proposition has shifted from generic AI enthusiasm toward governed, production-grade implementation. Contractors that can connect model orchestration, retrieval engineering, and policy compliance into a single coherent delivery will be easier to trust and easier to buy.

What Strong Contractors Do Differently

The strongest federal AI contractors do not wait for a compliance review at the end of a sprint. They build with governance as a first-class architectural concern. They define which models are approved for which workflows, what evidence needs to be retained, how guardrails are configured and updated, and how users stay inside approved boundaries – all before the first line of application code.

They also resist the temptation to promise a universal AI layer when the real need is a narrower, high-value workflow that can be controlled well. A single well-governed RAG bot that helps contracting officers navigate the FAR is worth more than a sprawling “enterprise AI platform” that nobody trusts with real decisions.

This is where an operational control layer becomes valuable. Instead of treating model orchestration, retrieval pipelines, guardrail engines, and audit logging as scattered custom development, mature firms deploy reusable platforms – like Knowledge Spaces – that enforce routing, traceability, and governed access across every production AI workflow. Build once, deploy many, govern everything.

Positioning Your Firm for Federal AI Work

If your firm wants to be taken seriously for federal AI contracts, stop leading with transformation language and start leading with delivery specifics. Show buyers that you understand the difference between deploying OpenAI in a sandbox and operating a governed multi-model pipeline in a FedRAMP environment. Show that you know what happens when an embedding model needs to be swapped, when a guardrail rule needs to be updated, or when an agency’s AI governance framework evolves mid-program.

A clean capabilities posture matters. Federal buyers and prime contractors want to know: how quickly can you stand up a governed AI capability on our program? What models do you support? What does your security and compliance story look like? Can you operate inside our existing infrastructure constraints?

For prime contractors managing portfolios of federal programs, the calculus is straightforward: you do not need to become an AI company to deliver AI capabilities. You need a trusted AI strategy partner who can serve as a fractional Chief AI Officer – someone who brings a production-ready platform, multi-model orchestration expertise, and governance discipline, so that your existing teams can deliver AI-powered results without building an AI practice from zero.

Add Production-Ready AI to Your Federal Programs

Sprinklenet works with prime contractors and agencies as a fractional Chief AI Officer and AI strategy partner. We bring governed platforms, multi-model orchestration, and delivery discipline to your teams – so you can offer production-grade AI without building from scratch.

Sprinklenet is an AI strategy, advisory, implementation, and systems integration firm serving government teams, prime contractors, and regulated enterprises. We serve as a fractional Chief AI Officer for select programs – bringing our Knowledge Spaces platform (16+ LLMs, RAG, enterprise security, 4-week deployment), multi-model orchestration expertise, and hands-on AI governance to teams that need production-ready capabilities.