Every organization considering AI deployment faces a fundamental tension: AI systems become more useful as they access more data, but more data access means more security risk. This tension is not theoretical. The history of enterprise technology is littered with examples of powerful tools that delivered great value while creating security vulnerabilities that were only discovered after damage was done. AI does not have to follow that pattern, but avoiding it requires deliberate security design from the very beginning.

The security challenges of AI are distinct from those of traditional enterprise software. AI systems ingest, process, and reason about data in ways that create novel attack surfaces. They can inadvertently memorize sensitive information from training data. They can be manipulated through carefully crafted inputs. And they can leak information through their outputs in ways that are subtle and difficult to detect. Understanding these risks is the first step toward managing them effectively.

The AI-Specific Threat Landscape

Traditional cybersecurity focuses on protecting data at rest and in transit – encryption, access controls, network segmentation, and monitoring. AI systems introduce a third state: data in use by a model. When an AI system processes a query against a document repository, it is actively reasoning about potentially sensitive content, and the outputs of that reasoning need to be just as carefully controlled as the inputs.

Prompt injection is one of the most discussed AI-specific threats. An attacker embeds malicious instructions within data that an AI system processes, attempting to make the system behave in unintended ways – disclosing information it should not, ignoring access controls, or producing misleading outputs. This is particularly concerning in enterprise environments where AI systems process documents from multiple sources with varying levels of trust.

Data leakage through AI outputs is a subtler risk. An AI system trained on or with access to sensitive documents might include confidential information in its responses to seemingly innocuous queries. A question about “general company policy” might generate an answer that inadvertently references a specific confidential negotiation if the AI has access to those documents. Preventing this requires careful attention to how AI systems select and present information from their knowledge bases.

Model inversion and extraction attacks attempt to reconstruct training data or steal proprietary models by analyzing the system’s outputs. While these attacks are more relevant to custom-trained models than to retrieval-augmented generation systems, any organization deploying AI should understand the risk landscape and take appropriate precautions.

Security Architecture for Enterprise AI

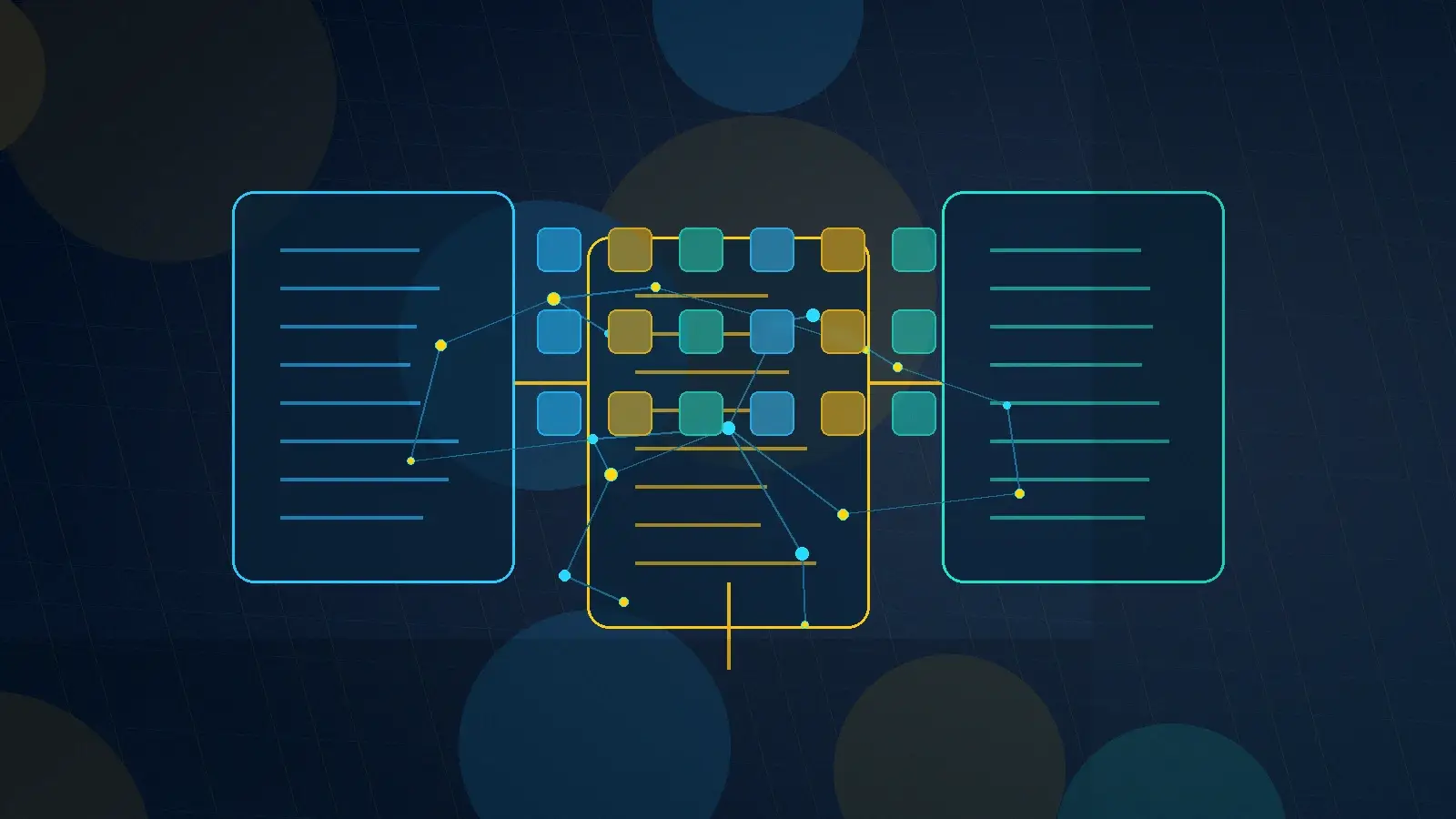

Securing enterprise AI starts with architecture decisions that embed security into the system’s foundation rather than bolting it on after deployment. The most critical decision is how the AI system accesses data. Retrieval-augmented generation architectures, where the AI searches a document repository at query time rather than being trained on the data, offer significant security advantages because the data remains in controlled repositories rather than being absorbed into the model.

Access control enforcement must happen at the retrieval layer, not just the presentation layer. When a user queries an AI knowledge management system, the retrieval component must filter results based on that user’s permissions before the language model ever sees the content. This is the approach that platforms like Knowledge Spaces use to ensure that AI responses respect existing information access policies. If the AI model receives unauthorized content and then tries to filter it at the output stage, there is always a risk that sensitive information will leak through.

Network architecture matters too. For government and high-security environments, AI systems may need to operate within air-gapped networks, government cloud environments (GovCloud), or on-premise infrastructure. The deployment model – cloud, hybrid, or on-premise – should be driven by security requirements, not convenience. Any AI vendor that cannot accommodate your deployment requirements is not suitable for your environment, regardless of how impressive their demo is.

Practical Security Controls

Beyond architecture, several practical security controls should be standard in any enterprise AI deployment. Input validation and sanitization help prevent prompt injection attacks by filtering or flagging suspicious content before it reaches the AI model. Output filtering scans AI responses for patterns that might indicate data leakage, such as email addresses, account numbers, or classification markings that should not appear in the user’s context.

Comprehensive logging is essential for both security monitoring and compliance. Every query, every retrieval result, and every AI output should be logged with sufficient detail to support forensic analysis if a security incident occurs. These logs also serve governance purposes, providing an audit trail that demonstrates how AI systems are being used and what information they are accessing.

Rate limiting and anomaly detection help identify potential abuse. If a user suddenly submits hundreds of queries targeting a specific topic or systematically probing for sensitive information, the system should flag this behavior for review. Normal usage patterns are relatively predictable; significant deviations warrant investigation.

Regular security assessments should include AI-specific testing. Traditional penetration testing covers network and application security, but AI systems also need adversarial testing – deliberate attempts to manipulate the AI through prompt injection, extract sensitive information through carefully crafted queries, or bypass access controls through indirect means. The security engineering required for AI systems requires specialized expertise that goes beyond traditional cybersecurity.

The Human Factor

Technology controls are necessary but not sufficient. The human element of AI security is equally important. Users need to understand what they should and should not share with AI systems. Administrators need to know how to configure access controls correctly. And security teams need to understand AI-specific threats well enough to detect and respond to them.

Training should be practical rather than theoretical. Instead of abstract lectures about AI risk, show users real examples of what can go wrong – sanitized case studies of AI data leakage, demonstrations of prompt injection, and clear guidelines for responsible AI use in their specific work context. Make it easy to do the right thing and difficult to do the wrong thing.

Incident response plans need to be updated for AI-specific scenarios. What happens when an AI system produces an output that contains classified information? Who investigates, how is the exposure assessed, and what remediation steps are required? Having these procedures documented and practiced before an incident occurs is the difference between a managed event and a crisis.

Security as an Enabler

The strongest argument for investing in AI security is that it enables broader, more valuable AI adoption. Organizations that lack confidence in their AI security posture tend to restrict AI access to low-value use cases – experimenting with chatbots for FAQs while keeping AI away from the sensitive, high-value data where it could deliver the most impact.

When security is built into the foundation, organizations can confidently deploy AI against their most important knowledge assets. They can use AI to search classified documents, analyze sensitive contracts, or process confidential personnel records – applications that deliver enormous value but require ironclad security controls.

The goal is not to prevent AI adoption in the name of security. It is to build security capabilities that allow AI to be deployed at full potential, against the data that matters most, with confidence that sensitive information is protected. That is the real return on AI security investment.