Every quarter, the Sprinklenet team sits down with CIOs and CFOs who are trying to figure out what AI will actually cost their organization. The conversation almost always starts in the same place: model API pricing. What do frontier model APIs cost per token? What about specialized reasoning models or open-weight models? Can we run selected models on our own infrastructure for less?

These are reasonable questions. They are also the wrong starting point. Model APIs and the infrastructure around them typically represent 15 to 20 percent of a well-executed AI program’s total spend. The other 80 to 85 percent – integration, data engineering, AI development, governance, change management – is where budgets either hold or collapse.

This guide breaks down what AI actually costs in year one, where the money goes, and how to plan for it without surprises.

The Budget Conversation Most Organizations Get Wrong

The most common budgeting mistake is treating AI like a software license. Organizations allocate funds for the tool – the model, the platform, maybe some cloud compute – and assume the rest will fit into existing operational budgets. It rarely does.

AI is not a product you install. It is a capability you build into your organization. That means the real cost drivers are the work surrounding the model: preparing your data so the model can use it, connecting AI outputs to the systems your people already rely on, establishing governance so the organization trusts the results, and training teams to actually change how they work.

When the Sprinklenet team helps organizations plan their first AI budget, the goal is to account for all five categories of spend – not just the one that shows up on a vendor invoice.

The Five Budget Categories

1. Infrastructure and Platform Costs (15 – 20% of Total)

This is the category most teams budget for first, and it is a much smaller share of total spend than most expect. Infrastructure includes:

- Cloud compute and storage – GPU instances for inference or fine-tuning, object storage for documents, and the networking to connect it all.

- Model API subscriptions – Pay-per-token costs for commercial models (OpenAI, Anthropic, Google) or hosting costs for open-source alternatives.

- Vector databases and retrieval infrastructure – The backbone of any retrieval-augmented generation (RAG) system, where your organization’s knowledge gets indexed and searched.

- Platform licensing – If you adopt a managed AI platform rather than building from scratch, this is where that cost lives.

For a mid-size organization running its first production AI workload, expect infrastructure costs between $3,000 and $15,000 per month depending on scale and model selection. These costs are predictable and tend to decrease over time as you optimize.
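As a rough cross-check, the monthly range above can be annualized and combined with the 15 to 20 percent share to see what total program size it implies. This is a minimal sketch: the dollar figures and percentages come from this guide, but combining them this way is an illustrative assumption, and the function names are hypothetical.

```python
# Figures quoted in this guide; combining them is an illustrative assumption.
MONTHLY_INFRA_USD = (3_000, 15_000)  # low, high monthly infrastructure cost
INFRA_SHARE_PCT = (15, 20)           # infrastructure as a share of total spend


def annualize(monthly_range):
    """Convert a (low, high) monthly range into a (low, high) annual range."""
    low, high = monthly_range
    return low * 12, high * 12


def implied_total_budget(annual_infra, share_pct):
    """Widest total-budget range consistent with the infra and share ranges."""
    infra_low, infra_high = annual_infra
    share_low, share_high = share_pct
    # Smallest total: cheapest infra at the largest share. Largest: the reverse.
    return infra_low * 100 / share_high, infra_high * 100 / share_low


annual = annualize(MONTHLY_INFRA_USD)
total = implied_total_budget(annual, INFRA_SHARE_PCT)
print(f"Annual infrastructure: ${annual[0]:,} to ${annual[1]:,}")
print(f"Implied year-one total: ${total[0]:,.0f} to ${total[1]:,.0f}")
```

The implied range is wide on purpose. It is a sanity check on whether an infrastructure quote and a total budget are in the same ballpark, not a forecast.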

2. Integration and Data Engineering (30 – 40% of Total)

This is the largest budget category and the one most often underestimated. AI models are only as useful as the data they can access and the systems they can interact with. Integration work includes:

- Data pipeline development – ETL processes that move data from source systems into formats the AI can consume. This includes document parsing, metadata extraction, and data normalization.

- API development and system connectors – Building the bridges between your AI capability and the enterprise systems it needs to read from or write to (CRM, ERP, case management, document management).

- Data quality and preparation – Cleaning, deduplicating, and enriching data. For organizations with decades of accumulated documents and records, this is significant work.

- Security integration – Ensuring AI components work within your existing identity management, network security, and access control frameworks.

This category is front-loaded. Expect to spend 60 to 70 percent of your integration budget in the first four months. After that, integration work shifts to maintenance and incremental expansion.

3. AI Development and Configuration (15 – 20% of Total)

This is the work that makes the AI actually perform well for your specific use cases:

- Model selection and evaluation – Testing multiple models against your actual data and requirements to find the right fit for each task.

- Prompt engineering and optimization – Developing, testing, and refining the instructions that guide model behavior. This is iterative work that requires domain expertise.

- RAG system design – Configuring retrieval strategies, chunking approaches, and re-ranking logic so the AI pulls the right context from your knowledge base.

- Testing and validation – Building evaluation frameworks that measure accuracy, relevance, and safety against ground truth. The Sprinklenet team recommends using a structured assessment approach like the AI Scorecard to benchmark capabilities before and after deployment.

4. Governance and Compliance (10 – 15% of Total)

For government agencies and regulated industries, this category is non-negotiable. For DoW organizations operating under emerging AI directives, governance is a foundational requirement, not an afterthought. Budget for:

- Policy development – Acceptable use policies, data handling procedures, and AI-specific security controls aligned with NIST AI RMF or agency-specific frameworks.

- Audit and monitoring infrastructure – Logging, traceability, and reporting systems that track what the AI does, what data it accesses, and what outputs it produces.

- Risk assessment and testing – Red-teaming, bias evaluation, and ongoing performance monitoring.

- Compliance documentation – Authority to Operate (ATO) packages, privacy impact assessments, and other documentation required for production deployment in federal environments.

Governance costs are relatively steady across the year, with a slight front-loading during initial policy development.

5. Change Management and Training (10 – 15% of Total)

The best AI system in the world delivers zero value if nobody uses it. Change management is the bridge between a working technical capability and actual organizational impact:

- Workflow redesign – Mapping existing processes and identifying where and how AI fits in. This is collaborative work with the people who do the work today.

- User training and enablement – Hands-on training sessions, documentation, and office hours that help users build confidence with new tools.

- Champion networks – Identifying and supporting early adopters who can model effective AI use for their peers.

- Ongoing support – Help desk capacity, feedback loops, and iterative refinement based on real user experience.
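The five category ranges can be turned into dollar ranges for any total budget with a few lines of arithmetic. The sketch below uses the percentage ranges from this guide; the $500,000 total is a hypothetical figure chosen only for illustration.

```python
# Percentage ranges for the five categories, as given in this guide.
CATEGORY_SHARES_PCT = {
    "Infrastructure and platform": (15, 20),
    "Integration and data engineering": (30, 40),
    "AI development and configuration": (15, 20),
    "Governance and compliance": (10, 15),
    "Change management and training": (10, 15),
}


def allocate(total_budget_usd):
    """Return {category: (low_usd, high_usd)} for a given total budget."""
    return {
        name: (total_budget_usd * low // 100, total_budget_usd * high // 100)
        for name, (low, high) in CATEGORY_SHARES_PCT.items()
    }


plan = allocate(500_000)  # hypothetical year-one budget
for name, (low, high) in plan.items():
    print(f"{name:<34} ${low:>9,} to ${high:>9,}")

# The low ends sum to 80% and the high ends to 110%, so the categories cannot
# all sit at the same extreme; a real plan picks points that total 100%.
low_total = sum(low for low, _ in CATEGORY_SHARES_PCT.values())
high_total = sum(high for _, high in CATEGORY_SHARES_PCT.values())
print(f"Sum of low ends: {low_total}%, sum of high ends: {high_total}%")
```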

Demystifying Model API Costs

Token-based pricing confuses most executives the first time they see it, so here is the short version. Model providers charge by the token, and a token is roughly three-quarters of a word. You pay separately for input tokens (what you send to the model) and output tokens (what the model generates). Output tokens typically cost three to four times as much as input tokens.

A typical enterprise query – sending a document chunk plus a question and receiving a paragraph-length answer – might cost $0.01 to $0.05 per interaction using a frontier model. At scale, that adds up, but it is manageable with the right architecture.
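To make the per-interaction figure concrete, here is a minimal sketch of the arithmetic. The prices and token counts are hypothetical placeholders, not any vendor's actual rates; substitute the numbers from your provider's current price sheet.

```python
def query_cost_usd(input_tokens, output_tokens,
                   input_price_per_m, output_price_per_m):
    """Cost of one request, with prices quoted in USD per million tokens."""
    return (input_tokens * input_price_per_m
            + output_tokens * output_price_per_m) / 1_000_000


# Hypothetical prices: output priced at 4x input, within the ratio noted above.
INPUT_PRICE_PER_M = 3.00
OUTPUT_PRICE_PER_M = 12.00

# Hypothetical query: a document chunk plus a question in, one paragraph out.
per_query = query_cost_usd(2_500, 300, INPUT_PRICE_PER_M, OUTPUT_PRICE_PER_M)
monthly = per_query * 50_000  # assumed monthly query volume

print(f"Per query: ${per_query:.4f}")
print(f"Per month at 50,000 queries: ${monthly:,.2f}")
```

Under these assumptions a single query lands at roughly one cent, at the low end of the range above. Longer context or longer answers push it toward the high end.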

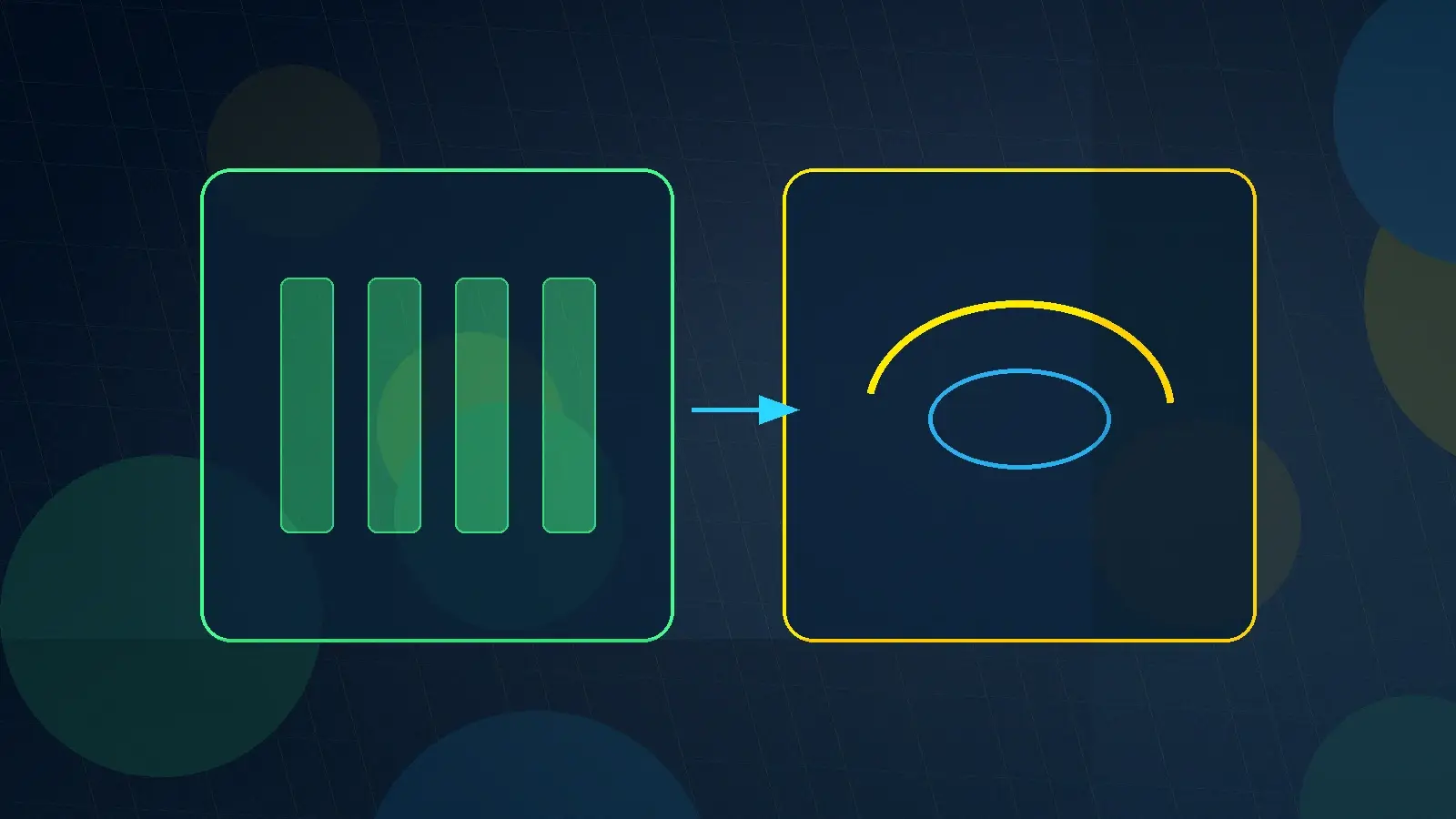

The most effective cost control strategy is multi-model routing. Not every task requires a frontier model. Simple classification, extraction, and summarization tasks can be handled by smaller, cheaper models at a fraction of the cost. A well-designed routing layer sends each request to the least expensive model capable of handling it. The Sprinklenet team has seen this approach reduce model API costs by a factor of 5 to 10 compared to routing everything through a single frontier model.
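A routing layer of this kind can be sketched in a few lines. Everything below is an illustrative assumption: the model names, capability tiers, per-request costs, task-to-tier mapping, and workload mix are hypothetical, and a production router would typically rely on evaluation results rather than a static lookup table.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Model:
    name: str
    tier: int                # higher tier = more capable (hypothetical scale)
    cost_per_request: float  # hypothetical blended cost in USD


MODELS = [
    Model("small", 1, 0.001),
    Model("mid", 2, 0.005),
    Model("frontier", 3, 0.030),
]

# Minimum tier assumed sufficient for each task type (hypothetical mapping).
REQUIRED_TIER = {
    "classification": 1,
    "extraction": 1,
    "summarization": 2,
    "multi_step_reasoning": 3,
}


def route(task_type):
    """Pick the cheapest model whose tier meets the task's requirement."""
    needed = REQUIRED_TIER.get(task_type, 3)  # unknown tasks go to frontier
    capable = [m for m in MODELS if m.tier >= needed]
    return min(capable, key=lambda m: m.cost_per_request)


# Hypothetical monthly request counts per task type.
workload = {
    "classification": 5_000,
    "extraction": 3_000,
    "summarization": 1_500,
    "multi_step_reasoning": 500,
}

routed_cost = sum(route(t).cost_per_request * n for t, n in workload.items())
frontier_cost = MODELS[-1].cost_per_request * sum(workload.values())
print(f"Routed: ${routed_cost:,.2f}  All-frontier: ${frontier_cost:,.2f}")
```

The size of the saving depends entirely on the workload mix and the price gap between tiers, so measure your own traffic before assuming a particular reduction.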

Build vs. Buy: The Total Cost of Ownership Decision

Every organization faces this question: should we build our own AI infrastructure or adopt a managed platform?

Building in-house gives you maximum control and customization. It also means hiring and retaining AI engineers (current market rate: $180,000 to $280,000 per year), maintaining infrastructure, keeping up with a model landscape that changes monthly, and accepting that your first production deployment is likely 6 to 12 months away. Total year-one cost for a small in-house team: $800,000 to $1.5 million before you serve your first user.

Adopting a managed platform compresses time to value and shifts ongoing maintenance to the vendor. You trade some customization flexibility for faster deployment (weeks, not months) and predictable costs. A managed platform engagement typically runs $200,000 to $600,000 in year one for comparable capability, with the option to expand as you prove value.

For organizations considering fractional AI leadership, a middle path often makes sense: bring in experienced leadership to guide strategy while leveraging a managed platform for execution. This avoids the overhead of a full-time AI team while still building internal knowledge.
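Placing the two year-one ranges side by side makes the size of the gap easier to see. This sketch uses only the build and managed-platform ranges quoted in this section; the helper names are hypothetical, and comparing midpoints is a simplification that ignores differences in scope and customization.

```python
# Year-one cost ranges quoted in this section (USD).
IN_HOUSE_USD = (800_000, 1_500_000)
MANAGED_USD = (200_000, 600_000)


def midpoint(cost_range):
    """Midpoint of a (low, high) range."""
    low, high = cost_range
    return (low + high) / 2


def gap_range(expensive, cheaper):
    """Smallest and largest possible difference between two cost ranges."""
    return expensive[0] - cheaper[1], expensive[1] - cheaper[0]


min_gap, max_gap = gap_range(IN_HOUSE_USD, MANAGED_USD)
print(f"In-house midpoint: ${midpoint(IN_HOUSE_USD):,.0f}")
print(f"Managed midpoint:  ${midpoint(MANAGED_USD):,.0f}")
print(f"Year-one difference: ${min_gap:,} to ${max_gap:,}")
```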

Year One Timeline and Spend Pattern

AI budgets are not evenly distributed across the year. Here is what a realistic spend pattern looks like:

Months 1 – 2: Foundation (20% of annual spend) – Discovery, data assessment, governance framework, architecture design. This phase is almost entirely people costs. The investment here determines whether everything that follows succeeds or struggles.

Months 3 – 4: Build (40% of annual spend) – Data pipeline development, integration work, initial model configuration, security implementation. This is the most resource-intensive phase. Infrastructure costs start appearing but remain modest compared to labor.

Months 5 – 8: Deploy and Iterate (25% of annual spend) – Production deployment, user training, performance tuning, feedback incorporation. Spend shifts from development to operations and support. This is where early ROI signals appear.

Months 9 – 12: Optimize and Expand (15% of annual spend) – Cost optimization, capability expansion, additional use case development. By this phase, your infrastructure costs are well-understood and your team is identifying new applications based on real experience.

The key takeaway: expect roughly 60 percent of your year-one budget to be consumed in the first four months, by the end of the build phase. This is normal and healthy. Front-loaded investment in data preparation and integration pays dividends in faster, smoother deployment later.
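The phase percentages above translate directly into a spend schedule. The sketch below computes spend per phase, the cumulative share at the end of each phase, and the average monthly burn within each phase. The phase shares come from this guide; the $600,000 annual budget is a hypothetical figure.

```python
from itertools import accumulate

# (phase name, length in months, share of annual spend in percent)
PHASES = [
    ("Months 1-2: Foundation", 2, 20),
    ("Months 3-4: Build", 2, 40),
    ("Months 5-8: Deploy and Iterate", 4, 25),
    ("Months 9-12: Optimize and Expand", 4, 15),
]


def phase_schedule(annual_budget_usd):
    """Return (phase, phase_spend, cumulative_pct, avg_monthly_spend) rows."""
    cumulative = accumulate(share for _, _, share in PHASES)
    rows = []
    for (name, months, share), cum_pct in zip(PHASES, cumulative):
        spend = annual_budget_usd * share // 100
        rows.append((name, spend, cum_pct, spend // months))
    return rows


schedule = phase_schedule(600_000)  # hypothetical annual budget
for name, spend, cum_pct, monthly in schedule:
    print(f"{name:<34} ${spend:>8,}  cumulative {cum_pct:>3}%  "
          f"about ${monthly:,}/month")
```

The average monthly figure is the number to watch: burn during the build phase runs at several times the rate of the final third of the year.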

Setting Realistic ROI Expectations

The Sprinklenet team advises clients to plan for three time horizons when measuring AI return on investment:

3 – 6 months: Early indicators. For well-scoped projects, you should see measurable improvements in task completion time, error rates, or throughput within this window. These are typically 20 to 40 percent improvements in specific workflows – document review, research synthesis, report generation, or data extraction.

6 – 12 months: Operational impact. As adoption grows and the system handles more use cases, look for reductions in process cycle times, improved consistency in outputs, and capacity gains that let your team take on work that was previously backlogged or outsourced.

12 – 24 months: Strategic value. The compounding effect of better data, trained users, and refined models starts producing capabilities that were not possible before – predictive insights, automated workflows, and decision support that fundamentally changes how the organization operates.

Be skeptical of any vendor promising ROI in weeks. Real organizational value takes time to build and even longer to measure accurately. Focus your early metrics on time savings (hours recovered per week per user), error reduction (defect rates in AI-assisted vs. manual processes), and throughput (volume of work completed per period).
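The three early metrics named above are simple to compute once you have before-and-after measurements. This is a minimal sketch with hypothetical function names and made-up sample values; the hard part in practice is collecting a clean baseline before deployment.

```python
def hours_recovered_per_user_week(baseline_hours, assisted_hours):
    """Weekly hours a user gets back on a task after AI assistance."""
    return baseline_hours - assisted_hours


def error_reduction_pct(manual_defect_rate, assisted_defect_rate):
    """Relative drop in defect rate, AI-assisted vs. manual, in percent."""
    return (manual_defect_rate - assisted_defect_rate) / manual_defect_rate * 100


def throughput_gain_pct(units_before, units_after):
    """Relative increase in work completed per period, in percent."""
    return (units_after - units_before) / units_before * 100


# Hypothetical measurements for one document-review workflow.
hours_saved = hours_recovered_per_user_week(baseline_hours=10, assisted_hours=7)
error_drop = error_reduction_pct(manual_defect_rate=0.08, assisted_defect_rate=0.05)
throughput = throughput_gain_pct(units_before=120, units_after=150)

print(f"Hours recovered per user per week: {hours_saved}")
print(f"Error reduction: {error_drop:.1f}%")
print(f"Throughput gain: {throughput:.1f}%")
```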

How Sprinklenet Structures AI Engagements

The Sprinklenet team believes AI budget planning should be transparent and predictable. Every engagement starts with a scoping phase that maps your data landscape, identifies high-value use cases, and produces a detailed budget estimate broken across all five categories described above.

Delivery is phased. Each phase has defined outcomes, a fixed timeline, and a clear decision point before the next phase begins. This lets organizations validate value incrementally rather than committing an entire year-one budget on day one.

For federal government clients, Sprinklenet holds a GSA Multiple Award Schedule contract with established rates for AI engineering, data science, and advisory services. GSA MAS pricing provides a streamlined procurement path and pre-negotiated rates that simplify the budgeting process.