AI features in mobile apps create new demands around latency, privacy, offline behavior, and user trust. A credible mobile AI feature starts with the workflow, then chooses the right combination of on-device logic, cloud inference, retrieval, permissions, and human review.

Executive Takeaway

- Start with the workflow, then choose the combination of on-device logic, cloud inference, retrieval, permissions, and human review that serves it.

- The implementation should be judged by workflow value, evidence quality, security boundaries, and operational ownership.

- Executives should expect a clear path from discovery to pilot, then from pilot to governed production.

Why This Matters

Latency, privacy, offline behavior, and user trust matter most for mobile product teams adding AI capabilities to customer-facing apps. Senior leaders do not need another demonstration that AI can produce fluent output. They need evidence that the workflow is useful, the data path is credible, the risks are controlled, and the system can be operated after launch.

A polished AI initiative also has to survive scrutiny from security, legal, procurement, finance, and the users doing the work. That is where many thin pilots fail: they look impressive in a controlled demo, but the architecture does not explain how the system will handle messy data, permissions, exceptions, or quality drift.

Architecture Considerations

- separate model interaction from mobile UI code

- limit data sent from the device

- measure latency under realistic network conditions

- design fallback states for failed model calls
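
The considerations above can be sketched in a few lines. This is a minimal illustration in Python, not a reference implementation: `ModelGateway`, `ModelResult`, `ALLOWED_FIELDS`, and the injected `transport` callable are all hypothetical names, and a real app would express the same shape in its own platform language. The sketch keeps model calls behind one boundary, strips non-allowlisted fields before anything leaves the device, records latency per call, and turns any failure into an explicit fallback state.

```python
import time
from dataclasses import dataclass
from typing import Callable, Mapping, Optional

# Hypothetical allowlist: only these fields may leave the device.
ALLOWED_FIELDS = frozenset({"query", "locale"})


@dataclass(frozen=True)
class ModelResult:
    text: Optional[str]  # None when the model call failed
    status: str          # "ok" or "fallback"
    latency_ms: float    # wall-clock time spent in the transport call


def minimize_payload(raw: Mapping[str, str]) -> dict:
    """Drop every field that is not explicitly allowlisted."""
    return {k: v for k, v in raw.items() if k in ALLOWED_FIELDS}


class ModelGateway:
    """Single boundary for model calls; UI code only ever sees ModelResult."""

    def __init__(self, transport: Callable[[dict], str],
                 clock: Callable[[], float] = time.perf_counter):
        self._transport = transport  # injected, so tests can use a fake
        self._clock = clock

    def ask(self, raw: Mapping[str, str]) -> ModelResult:
        payload = minimize_payload(raw)
        start = self._clock()
        try:
            text: Optional[str] = self._transport(payload)
            status = "ok"
        except Exception:
            # Assumption: every transport failure maps to one fallback state.
            text, status = None, "fallback"
        latency_ms = (self._clock() - start) * 1000.0
        return ModelResult(text, status, latency_ms)
```

The UI layer can then branch on `status` alone, for example showing a cached or rule-based answer when it is `"fallback"`. The recorded `latency_ms` values can be collected under throttled or lossy network conditions to see how the feature behaves outside the office Wi-Fi.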

Product Questions

- What user decision improves?

- What data is required?

- What should happen when the answer is uncertain?
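
One way to make the last question concrete is to decide in advance what the app does at each level of certainty. The Python sketch below is illustrative only: it assumes the system can produce a confidence score between 0 and 1 (many models do not provide a calibrated one), and the `Action` names and threshold values are hypothetical placeholders that a team would set from its own evaluation data.

```python
from enum import Enum


class Action(Enum):
    SHOW = "show"          # present the answer directly
    CONFIRM = "confirm"    # present it, but ask the user to verify
    ESCALATE = "escalate"  # withhold it and route to human review


def route_answer(confidence: float,
                 show_at: float = 0.85,
                 confirm_at: float = 0.5) -> Action:
    """Map a confidence score in [0, 1] to a product behavior.

    The default thresholds are illustrative assumptions, not recommendations.
    """
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if confidence >= show_at:
        return Action.SHOW
    if confidence >= confirm_at:
        return Action.CONFIRM
    return Action.ESCALATE
```

Writing the rule down this way forces the product decision to be explicit: someone has to own the thresholds and the review queue behind `ESCALATE`.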

Executive Review Lens

When a senior team reviews this kind of initiative, the discussion should move quickly from possibility to evidence. The team should be able to explain what workflow changes, what information the system uses, what controls prevent misuse, and what metric proves the work is worth continuing.

The review should also separate strategy from implementation. Strategy defines the problem, value, risk tolerance, and ownership. Implementation proves the data path, integration approach, release process, and support model. If those two tracks are not connected, the project can look aligned in a slide deck and still fail in production.

Signals of Quality

- the workflow is narrow enough to test with real users

- source systems and permissions are documented before launch

- quality is measured with examples that match the work

- the team knows who will maintain content, prompts, policies, and connectors

What to Avoid

The biggest warning sign is a delivery plan that depends on generic AI capability rather than a specific operating workflow. A credible plan should not rely on vague claims about automation, personalization, or intelligence. It should show how the system will behave when data is incomplete, when a user asks for something outside scope, or when the answer needs human approval.

Leaders should also watch for vendor or internal team proposals that skip evaluation. Without a test set, acceptance criteria, and a feedback loop, the organization cannot tell whether the system is getting better or merely producing more output.
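
A test set and an acceptance criterion do not need heavy tooling to get started. The following Python sketch shows the smallest version of the idea under stated assumptions: `EvalCase`, `pass_rate`, and `meets_acceptance` are hypothetical names, the substring check stands in for whatever quality criteria fit the actual workflow, and the 0.9 threshold is a placeholder.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass(frozen=True)
class EvalCase:
    prompt: str
    must_contain: str  # simplest possible check; real criteria will be richer


def pass_rate(system: Callable[[str], str], cases: Sequence[EvalCase]) -> float:
    """Fraction of cases whose output contains the expected text."""
    if not cases:
        raise ValueError("evaluation set is empty")
    passed = sum(
        1 for case in cases
        if case.must_contain.lower() in system(case.prompt).lower()
    )
    return passed / len(cases)


def meets_acceptance(rate: float, threshold: float = 0.9) -> bool:
    """Compare a measured pass rate with an agreed acceptance threshold."""
    return rate >= threshold
```

Running the same cases before and after each change gives the feedback loop: the pass rate either moves or it does not, and failed cases become the next items to review.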

Architecture and Delivery Notes

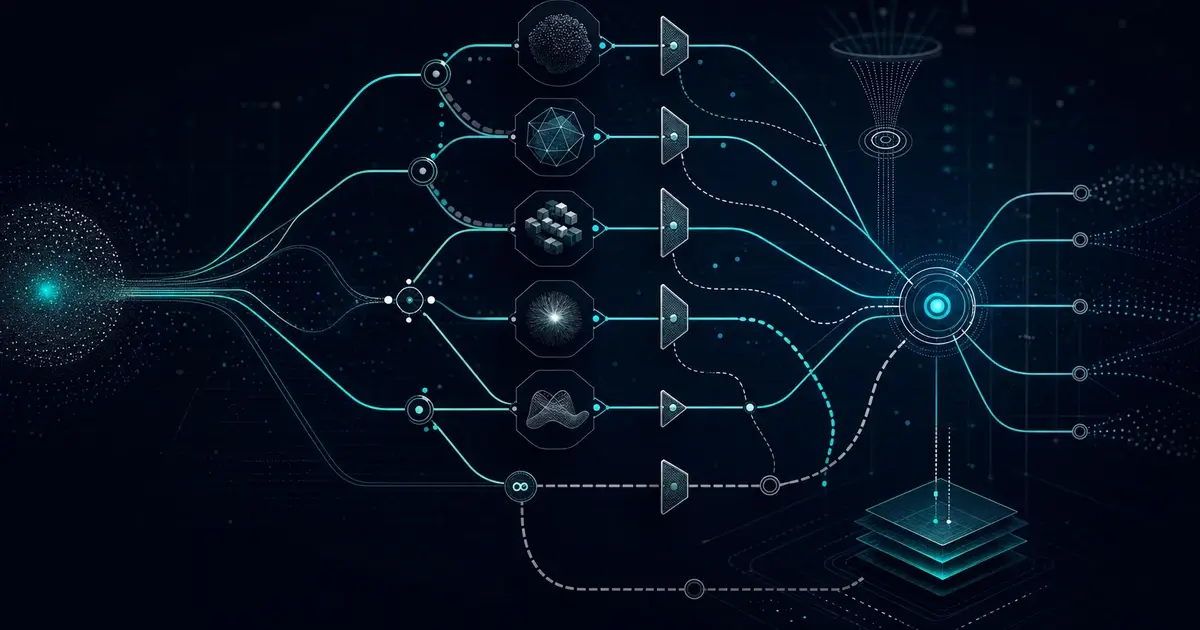

A credible delivery plan should show how source systems connect to the AI layer, how access is enforced, how prompts and policies are versioned, and how quality will be measured. The team should also decide what is reusable after the first release: connectors, evaluation sets, audit events, monitoring dashboards, and handoff documentation.
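
As a rough illustration of prompt versioning and audit events, the Python sketch below records which prompt version and which sources were behind a given response. All names here (`PromptVersion`, `audit_event`, the event fields) are hypothetical, and the storage, signing, and retention of such events are left out.

```python
import hashlib
import json
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class PromptVersion:
    name: str
    version: int
    text: str

    @property
    def digest(self) -> str:
        # A short content hash makes silent edits to the prompt text detectable.
        return hashlib.sha256(self.text.encode("utf-8")).hexdigest()[:12]


def audit_event(prompt: PromptVersion, user_id: str,
                source_ids: Sequence[str]) -> str:
    """Serialize which prompt version and sources produced a response."""
    return json.dumps(
        {
            "prompt": prompt.name,
            "version": prompt.version,
            "digest": prompt.digest,
            "user": user_id,
            "sources": sorted(source_ids),
        },
        sort_keys=True,
    )
```

With events like this, a reviewer can trace a questionable answer back to the exact prompt text and source documents in use at the time, which is the practical meaning of auditability here.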

The strongest programs keep the design simple enough to operate. They start with one workflow, define the human owner, prove the data path, and then expand only after the team can measure accuracy, latency, cost, exception handling, and user value.

Implementation Pattern

A practical implementation should move through four stages. First, define the workflow and the user decision that needs to improve. Second, identify the authoritative source data and the permission model. Third, build a narrow release with evaluation examples that reflect real work. Fourth, hand the system to an owner with monitoring, review, and change-control routines.

This pattern keeps the effort grounded. It prevents teams from confusing a polished prototype with a production system, and it gives executives a clearer view of cost, risk, and reuse before the work expands.

What Compounds Over Time

The best AI programs create reusable assets with every release: connectors, retrieval patterns, guardrail decisions, evaluation sets, prompt and policy versions, and runbook entries. Those assets matter because they reduce the cost and risk of the next use case.

Reusable assets also strengthen the organization's reputation and operating model. The organization becomes known for shipping useful, governed AI systems instead of isolated experiments. The work becomes easier to explain to leadership, procurement, security, and the teams who will use it every day.

Questions to Ask

- What operational decision or workflow improves if this works?

- Which data sources are authoritative, and who owns them?

- What should the system refuse or escalate?

- How will the team know quality has improved after release?

Sprinklenet Perspective

Sprinklenet builds AI systems with the details that matter after the demo: governed retrieval, multi-model orchestration, secure connectors, human review, evaluation, and auditability. See AI services and Knowledge Spaces for more on how we approach production AI work.