Knowledge Spaces is Sprinklenet’s governed enterprise AI platform for teams that need useful AI on real organizational knowledge without losing control of data, policy, model behavior, or auditability.

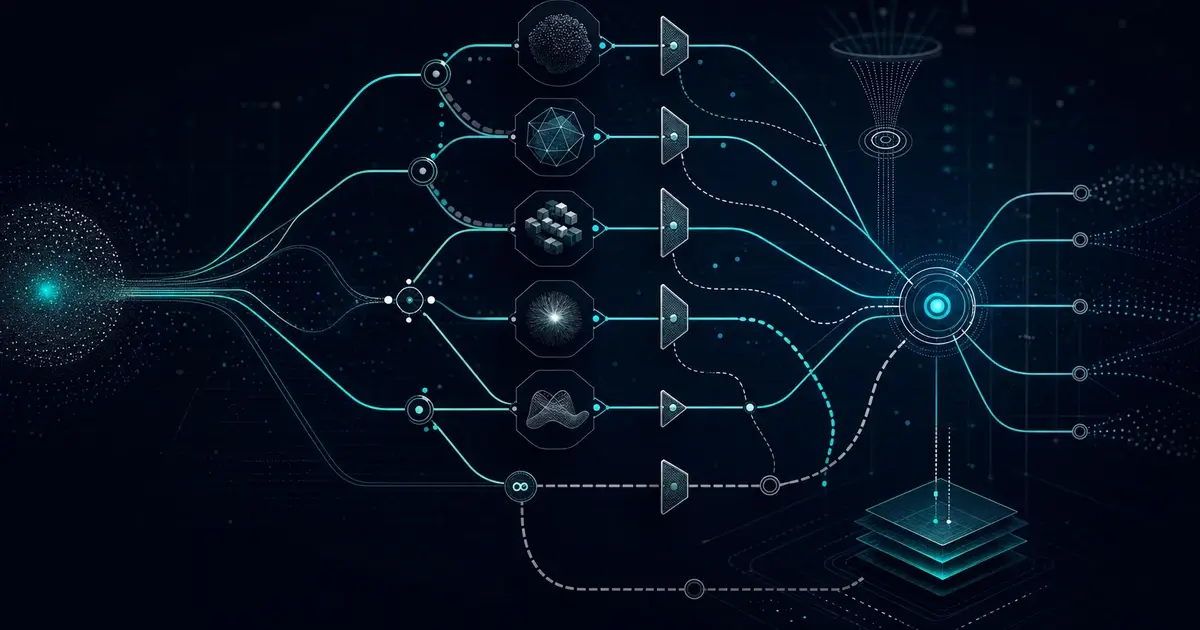

Knowledge Spaces sits between users, enterprise systems, and model providers so organizations can configure AI experiences once and operate them with consistent controls.

- Routes work across current API-driven and self-hosted model families.

- Connects to enterprise data, legacy applications, file repositories, and APIs.

- Grounds responses in approved sources with citations and traceability.

- Supports SSO, role-based access, guardrails, audit logs, and controlled sharing.

What Knowledge Spaces Is

Knowledge Spaces is neither a one-off chatbot nor a thin wrapper around a single model. It is a managed control layer for configuring, governing, and monitoring AI experiences across an organization.

A Space contains the sources, connectors, model configuration, bot instructions, access controls, and governance rules for a specific knowledge domain. Each bot can then use that Space to answer questions, support workflows, or expose knowledge through an embedded widget, API, or standalone interface.
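To make the Space concept concrete, here is a minimal sketch of how such a container might be modeled. The class names, field names, and the `can_access` helper are hypothetical and chosen for illustration; they are not the Knowledge Spaces schema or API.

```python
from dataclasses import dataclass, field


@dataclass
class Bot:
    """A bot that answers from one Space (hypothetical shape)."""
    name: str
    instructions: str
    channel: str  # "widget", "api", or "standalone"


@dataclass
class Space:
    """Illustrative container for one knowledge domain (not the product schema)."""
    name: str
    sources: list[str] = field(default_factory=list)
    connectors: list[str] = field(default_factory=list)
    allowed_models: list[str] = field(default_factory=list)
    allowed_roles: set[str] = field(default_factory=set)
    governance_rules: dict[str, str] = field(default_factory=dict)
    bots: list[Bot] = field(default_factory=list)

    def can_access(self, user_roles: set[str]) -> bool:
        """A user may use the Space if they hold at least one allowed role."""
        return bool(self.allowed_roles & user_roles)


# Example values are invented for illustration.
hr_space = Space(
    name="hr-policy",
    sources=["employee-handbook.pdf"],
    connectors=["sharepoint"],
    allowed_models=["claude"],
    allowed_roles={"hr", "manager"},
    governance_rules={"required_disclaimer": "Not legal advice."},
    bots=[Bot("policy-helper", "Answer only from cited sources.", "widget")],
)
```

Keeping sources, models, access, and governance in one object is what lets several bots share the same controls instead of redefining them per bot.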

The goal is simple: let teams use modern AI against trusted enterprise knowledge while keeping the organization in control of what the AI can access, say, cite, and share.

Key Platform Capabilities

Model Orchestration

Configure model families such as Claude, ChatGPT, Gemini, Grok, Llama, Qwen, DeepSeek, and other API-driven or self-hosted options without locking the workflow to one provider.

Grounded Retrieval

Use retrieval and vector search to ground responses in approved documents, records, and structured data with citation and source attribution.

Enterprise Connectors

Connect SharePoint, OneDrive, Salesforce, PostgreSQL, REST APIs, OAuth-secured systems, private data lakes, file repositories, and legacy .NET applications.

Governance Controls

Define allowed topics, disallowed outputs, required disclaimers, escalation rules, review gates, and bot-specific behavior.

Security And Access

Support SAML SSO, CAC/PKI patterns where appropriate, role-based access control, tenant separation, and detailed audit trails.

Operational Monitoring

Track usage, model selection, latency, guardrail events, source coverage, and user feedback so the platform can be managed in production.

Deployment Options

Knowledge Spaces can be deployed as an embedded widget, standalone interface, API-backed service, or platform layer behind a client application. Deployment patterns can target AWS, Azure, Google Cloud, private cloud, containerized infrastructure, or restricted environments depending on the requirements.

Sprinklenet is pursuing FedRAMP authorization. Knowledge Spaces should not be described as FedRAMP authorized until that process is complete. For federal and regulated deployments, the practical focus today is on containerized deployment options, GovCloud-ready patterns, private data boundaries, audit evidence, SSO, RBAC, and documented controls.

Scope The Space

Define the audience, data sources, risk boundaries, use cases, success criteria, and controls before configuring the platform.

Connect Knowledge

Ingest documents, connect enterprise systems, map source permissions, and decide which knowledge should be available to each bot.

Configure Models And Guardrails

Select allowed model families, routing rules, output constraints, review gates, and citation behavior for each workflow.

Validate And Operate

Test against representative questions, review citations, tune prompts and policies, then monitor usage and quality after launch.

Frequently Asked Questions

Getting Started

How long does deployment take?

A narrow pilot can often be configured in weeks when sources, users, and governance rules are clear. Production timelines depend on integration complexity, security review, data readiness, and the number of workflows being launched.

What does a typical engagement include?

Most engagements start with workflow and source mapping, then move into Space configuration, connector setup, bot behavior, guardrail rules, user testing, and production readiness review. The goal is to avoid a demo-only chatbot and ship a governed workflow that people can actually use.

Models And Retrieval

What model families does Knowledge Spaces support?

Knowledge Spaces is model-agnostic. It can be configured with current API-driven and self-hosted model families such as Claude, ChatGPT, Gemini, Grok, Llama, Qwen, DeepSeek, and other models as availability and customer requirements change.

How does multi-model routing work?

Each bot can be configured with allowed model families and routing rules. Routing can consider task type, cost, latency, response format, tool use, or risk profile. This gives teams more control than a single-provider deployment.
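As a rough illustration of rule-based routing, the sketch below picks the cheapest allowed model that meets a request's tool-use and latency constraints. The `ModelOption`, `Request`, and `route` names, the placeholder model families, and the cost and latency figures are all assumptions made for this example, not the platform's routing engine.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ModelOption:
    family: str
    cost_per_1k_tokens: float   # illustrative numbers only
    typical_latency_ms: int
    supports_tools: bool


@dataclass(frozen=True)
class Request:
    task_type: str
    needs_tools: bool = False
    max_latency_ms: Optional[int] = None


def route(request: Request, allowed: list[ModelOption]) -> ModelOption:
    """Pick the cheapest allowed model that satisfies the request's constraints."""
    candidates = [
        m for m in allowed
        if (not request.needs_tools or m.supports_tools)
        and (request.max_latency_ms is None
             or m.typical_latency_ms <= request.max_latency_ms)
    ]
    if not candidates:
        raise ValueError(f"no allowed model satisfies {request}")
    return min(candidates, key=lambda m: m.cost_per_1k_tokens)


# Hypothetical allow-list for one bot.
ALLOWED = [
    ModelOption("model-a", cost_per_1k_tokens=0.50, typical_latency_ms=900, supports_tools=True),
    ModelOption("model-b", cost_per_1k_tokens=0.10, typical_latency_ms=300, supports_tools=False),
]
```

With this allow-list, a plain summarization request goes to the cheaper `model-b`, while a request that needs tool use falls through to `model-a`. A real policy would likely also weigh task type, response format, and risk profile.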

How does retrieval-augmented generation work?

Approved sources are indexed for retrieval. When a user asks a question, the platform retrieves relevant passages or records, sends controlled context to the selected model, and returns an answer with source attribution where appropriate.
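The retrieve-then-generate flow can be sketched in a few lines. This toy version ranks passages by word overlap as a stand-in for vector search and stops at building the prompt, so no model is called. The `Passage` type, the function names, and the sample passages are hypothetical.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Passage:
    source_id: str
    text: str


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, index: list[Passage], top_k: int = 2) -> list[Passage]:
    """Rank passages by words shared with the question (toy stand-in for vector search)."""
    q = tokenize(question)
    scored = [(len(q & tokenize(p.text)), p) for p in index]
    ranked = sorted((s for s in scored if s[0] > 0), key=lambda s: s[0], reverse=True)
    return [p for _, p in ranked[:top_k]]


def build_prompt(question: str, passages: list[Passage]) -> str:
    """Include only the retrieved passages, each tagged with its source ID."""
    context = "\n".join(f"[{p.source_id}] {p.text}" for p in passages)
    return (
        "Answer using only the sources below and cite them.\n"
        f"{context}\n\nQuestion: {question}"
    )


# Invented sample index of approved passages.
INDEX = [
    Passage("handbook#4", "Employees accrue 15 vacation days per year."),
    Passage("handbook#9", "Expense reports are due within 30 days."),
    Passage("it-policy#2", "Laptops must use full disk encryption."),
]

question = "How many vacation days do employees accrue?"
hits = retrieve(question, INDEX)
prompt = build_prompt(question, hits)
citations = [p.source_id for p in hits]
```

Because the prompt is assembled only from retrieved passages, the source IDs in `citations` are exactly the material the model was shown, which is what makes attribution traceable.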

Enterprise Data And Integrations

What data sources can Knowledge Spaces connect to?

Common sources include SharePoint, OneDrive, Salesforce, PostgreSQL, REST APIs, OAuth-secured applications, file repositories, private data lakes, uploaded PDFs and office documents, and legacy .NET applications. Custom connectors can be scoped when a workflow depends on a proprietary system.

Can Knowledge Spaces connect to legacy systems?

Yes. Many useful enterprise workflows depend on older systems that are not clean SaaS applications. Knowledge Spaces can connect through APIs, database access, file exports, middleware, or custom integration layers depending on security and data architecture.

Can I bring my own API keys?

Yes. Customers can use bring-your-own-key configurations for supported model providers, or they can use Sprinklenet-managed access where that operating model makes more sense.

Security, Compliance, And Governance

Is Knowledge Spaces FedRAMP authorized?

No. Sprinklenet is pursuing FedRAMP authorization, but Knowledge Spaces should not be represented as FedRAMP authorized today. For current regulated deployments, we focus on documented controls, containerized deployment patterns, SSO, role-based access, audit evidence, and deployment environments that match the customer’s requirements.

What guardrails are available?

Administrators can configure PII detection, prompt-injection defenses, topic boundaries, required disclaimers, disallowed outputs, review gates, and escalation rules. Guardrail events are logged so teams can see what the system allowed, blocked, or routed for review.
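A guardrail check of this kind might look like the sketch below, which blocks out-of-scope topics, routes answers containing apparent PII for review, and appends a required disclaimer. The regular expressions are deliberately simple, and the `GuardrailPolicy`, `GuardrailResult`, and `apply_guardrails` names are hypothetical; production PII detection and prompt-injection defenses need far more than this.

```python
import re
from dataclasses import dataclass, field

# Simplified patterns for illustration; real PII detection is broader.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
SSN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


@dataclass
class GuardrailPolicy:
    blocked_topics: set[str] = field(default_factory=set)
    required_disclaimer: str = ""


@dataclass
class GuardrailResult:
    action: str                      # "allow", "block", or "review"
    text: str
    events: list[str] = field(default_factory=list)


def apply_guardrails(question: str, draft_answer: str,
                     policy: GuardrailPolicy) -> GuardrailResult:
    """Check topic boundaries, then PII, then attach the disclaimer."""
    events: list[str] = []
    words = set(re.findall(r"[a-z]+", question.lower()))
    hit = words & policy.blocked_topics
    if hit:
        events.append(f"blocked_topic:{sorted(hit)[0]}")
        return GuardrailResult("block", "This topic is outside the bot's scope.", events)
    if EMAIL.search(draft_answer) or SSN.search(draft_answer):
        events.append("pii_detected")
        return GuardrailResult("review", "", events)
    text = draft_answer
    if policy.required_disclaimer:
        text = f"{draft_answer}\n\n{policy.required_disclaimer}"
        events.append("disclaimer_added")
    return GuardrailResult("allow", text, events)
```

Every branch records an event, so the returned `events` list can feed the same log that shows what was allowed, blocked, or routed for review.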

Can Knowledge Spaces work in restricted or disconnected environments?

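Deployments can be designed for public cloud, private cloud, containerized environments, or restricted networks. The exact model and retrieval architecture depends on the customer environment, data sensitivity, and hosting constraints. A fully disconnected network, for example, cannot reach externally hosted model APIs, so it requires self-hosted models.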

How does audit logging work?

Knowledge Spaces logs meaningful platform events such as user access, source changes, bot queries, model selections, guardrail events, permission changes, and administrative activity. These records support operations, compliance review, and incident investigation.
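One common way to capture such events is as append-only, one-record-per-line JSON. The sketch below assumes that approach; the field names, the event-type strings, and the `audit_record` helper are hypothetical and do not describe the platform's actual log format.

```python
import json
from datetime import datetime, timezone

# Event types mirror the categories listed above; the string names are assumed.
EVENT_TYPES = {
    "user_access", "source_change", "bot_query", "model_selection",
    "guardrail_event", "permission_change", "admin_activity",
}


def audit_record(event_type: str, actor: str, space: str, detail: dict) -> str:
    """Serialize one audit event as a single JSON line with a UTC timestamp."""
    if event_type not in EVENT_TYPES:
        raise ValueError(f"unknown event type: {event_type}")
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "event_type": event_type,
        "actor": actor,
        "space": space,
        "detail": detail,
    }
    return json.dumps(record, sort_keys=True)


# Invented example event.
line = audit_record("model_selection", "user-123", "hr-policy", {"model": "model-a"})
```

Rejecting unknown event types at write time keeps the log queryable later, since compliance reviews and incident investigations usually filter by event type, actor, and time range.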

Use Cases

What kinds of workflows fit Knowledge Spaces?

Strong fits include internal knowledge assistants, regulated policy guidance, compliance research, customer support grounded in approved content, proposal research, onboarding, partner portals, analyst workflows, and application-specific AI features built on top of governed knowledge.

Can Knowledge Spaces support agentic or AI-to-AI workflows?

Yes. Knowledge Spaces can expose governed knowledge through APIs so another application or agent can query it as a controlled tool. The same access rules, source boundaries, guardrails, and audit logging still apply.
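To illustrate how an agent-facing tool can keep the same controls, the sketch below filters documents by the caller's roles before matching and logs every call. The `Document` type, the `query_tool` function, and the sample data are hypothetical; substring matching stands in for real retrieval.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    source_id: str
    text: str
    allowed_roles: frozenset[str]


def query_tool(caller_roles: set[str], query: str,
               documents: list[Document], audit_log: list[dict]) -> list[str]:
    """Return IDs of matching documents the caller may see, and log the call."""
    # Enforce access rules first so hidden documents never reach the matcher.
    visible = [d for d in documents if d.allowed_roles & caller_roles]
    matches = [d.source_id for d in visible if query.lower() in d.text.lower()]
    audit_log.append({
        "event": "bot_query",
        "roles": sorted(caller_roles),
        "query": query,
        "returned": matches,
    })
    return matches


# Invented sample documents with per-document role lists.
DOCS = [
    Document("finance#1", "Quarterly budget forecast", frozenset({"finance"})),
    Document("wiki#7", "Budget request process", frozenset({"finance", "staff"})),
]
log: list[dict] = []
result = query_tool({"staff"}, "budget", DOCS, log)
```

Here an agent acting with the `staff` role sees only `wiki#7`, even though both documents mention budgets, and the call is recorded whether or not anything is returned.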

How is Knowledge Spaces different from ChatGPT or Copilot?

Consumer AI tools are useful, but they do not give every organization the control layer needed for multi-tenant data separation, custom connectors, source-governed retrieval, model routing, workflow-specific guardrails, and detailed auditability. Knowledge Spaces is built for those enterprise operating requirements.

How is Knowledge Spaces different from building a custom RAG system?

A custom RAG build can work for one use case, but teams often underestimate the ongoing work around authentication, permissions, source freshness, evaluation, model changes, logging, and governance. Knowledge Spaces provides a reusable platform layer so each new workflow does not start from scratch.
